Part 1: Introduction to Epistemology

- What is it? The lecture introduces "Epistemology" (from the Greek word epistēmē, meaning knowledge), the philosophical study of human knowledge: its nature, origin, and limits.

- Introspection: Philosophers often study knowledge through "Introspection," which means looking inward at their own mental and emotional states.

Part 2: The Problem of Sensations

- Can you trust your senses? The presentation challenges our reliance on our senses. It references optical illusions (like train tracks appearing to converge or a pencil appearing to bend in water) to show how easily our eyes are tricked.

- Visual Maps: Using the example of an infrared camera vs. a normal camera, it explains that our vision is just one "map" or color code of reality. If humans only saw infrared, our reality would look completely different.

- The Thermal Experiment: To show that even touch can deceive, it describes an experiment where one hand is put in hot water and the other in cold water. When both are moved to room-temperature water, the hand from the hot water feels cold, and the hand from the cold water feels hot, even though both are in the same water.

- The Skeptical Question: Finally, supporters of these arguments ask: what if our senses are being tricked right now, by tricks too good to be discovered?

- The Truman Show Analogy: The lecture uses the movie The Truman Show (where a man discovers his entire life is a fake TV set) to illustrate how someone might start to doubt their entire reality.
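The thermal experiment above can be sketched in a few lines of code. This is only an illustrative model, not something from the lecture: it assumes that a hand reports the difference between the water's temperature and the temperature the hand has adapted to, rather than the absolute temperature. The function name `perceived` and all temperature values are hypothetical.

```python
def perceived(actual_c: float, adapted_c: float) -> str:
    """Return the sensation reported by a hand adapted to `adapted_c`.

    Assumption (for illustration only): sensation depends on the
    difference between the water and the hand's adapted temperature.
    """
    difference = actual_c - adapted_c
    if difference > 0:
        return "hot"
    if difference < 0:
        return "cold"
    return "neutral"


ROOM_WATER_C = 25.0  # hypothetical room-temperature water

# Same water, two different reports, depending on where each hand was before.
hand_from_hot = perceived(ROOM_WATER_C, adapted_c=40.0)
hand_from_cold = perceived(ROOM_WATER_C, adapted_c=10.0)
print(hand_from_hot, hand_from_cold)
```

Under this assumption the two hands disagree about one and the same bowl of water, which is the point of the experiment: the sensation tells us about the sensor's state as much as about the world.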

Part 3: Descartes and "I Think Therefore I Am"

- Radical Doubt: The philosopher René Descartes decided to doubt everything: his senses, the real world, and even whether a demon was controlling his mind with realistic illusions.

- The Conclusion: He realized that the only thing he couldn't doubt was that he was thinking. Therefore, he must exist as a "thinking thing." This led to the famous quote: "I think, therefore I am."

- Modern Equivalents: The lecture compares Descartes' demon analogy to being trapped in a hyper-realistic Virtual Reality (VR) simulation or The Matrix, asking if someone born into VR could ever know the difference.

Part 4: The "Other Minds" Problem

- Subjective Reality: If we can only be sure of our own minds, how do we know other organisms have minds like ours?

- The Color Red: The lecture asks: "Is your 'Red' the same as my 'Red'?" The brain assigns a color code to specific wavelengths of light, but we can never truly prove that the "red" you see in your mind looks the same as the "red" someone else sees.

- Zombies and Comas: How do we know what it feels like to be someone else? The lecture uses "Philosophical Zombies" (creatures that look and act human but lack consciousness), patients in comas, and even bats (which "see" using sound) to ask how we can truly understand the inner consciousness of another being.

Part 5: The Mind as a Computer (The IPO Model)

- The lecture shifts to comparing the human mind to a computer using the IPO (Input-Processing-Output) model.

- Example (Calculator): Input = pressing 6, +, and 5. Processing = addition. Output = displaying 11 on the screen.

- IPO stands for:

  - Input: a "requirement from the environment"

  - Processing: a "computation" based on the input information

  - Output: a "provision for the environment"

- Example (Human Learning): A baby receives raw audio (Input). The brain uses "unsupervised learning" to deduce grammar and vocabulary (Processing). The baby eventually says "Mama" (Output). Later, the growing child also learns through:

  - Supervised learning (e.g., being told that this is a "banana"), and

  - Reinforcement learning (e.g., learning that making certain funny sounds makes "Mama" smile).
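The calculator example can be written out as a minimal sketch to make the three IPO stages explicit. The function names `process` and `run_calculator` are hypothetical and chosen only for this illustration; the sketch supports addition alone.

```python
from typing import List


def process(a: int, b: int) -> int:
    """Processing: the computation performed on the input information."""
    return a + b


def run_calculator(keys: List[str]) -> str:
    """Turn key presses (Input) into the text shown on the screen (Output)."""
    # Input: exactly three key presses, e.g. ["6", "+", "5"].
    left, operator, right = keys
    if operator != "+":
        raise ValueError("this sketch only supports addition")
    # Processing: delegate to the computation step.
    result = process(int(left), int(right))
    # Output: what the environment (the user) gets back.
    return str(result)


display = run_calculator(["6", "+", "5"])
print(display)  # 11
```

Keeping `process` separate from the input parsing and the output formatting mirrors the model: the computation in the middle knows nothing about keys or screens.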

Part 6: Android Epistemology & The Chinese Room

- The Big Question: Does a computer actually understand the inputs it processes?

- The Chinese Room Experiment: Imagine an English speaker who knows no Chinese, locked in a room with a rulebook. They receive questions in Chinese (Input), use the English rulebook to find the correct Chinese symbols to draw, and pass them back (Output). To the outside world, it looks like they speak Chinese, but they actually understand nothing.

- The Lesson: Just like the person in the room, AI doesn't "understand" language or have human consciousness; it is simply executing instructions. Even if an AI passes the Turing Test (convincing a human that it is human), that does not mean it is truly thinking.
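The Chinese Room can be sketched as a plain lookup table. This is a deliberately simplified illustration, not the thought experiment's actual rulebook: the entries in `RULEBOOK`, the `FALLBACK` reply, and the function name `chinese_room` are all hypothetical.

```python
# Hypothetical rulebook: incoming symbol strings mapped to outgoing ones.
RULEBOOK = {
    "你好吗？": "我很好，谢谢。",
    "你叫什么名字？": "我叫小明。",
}

# Hypothetical reply used when no rule matches the incoming symbols.
FALLBACK = "请再说一遍。"


def chinese_room(message: str) -> str:
    """Return the symbols the rulebook prescribes for `message`.

    The function only matches shapes; nothing in it represents
    what the symbols mean.
    """
    return RULEBOOK.get(message, FALLBACK)


reply = chinese_room("你好吗？")
print(reply)
```

From the outside the replies look fluent, yet the program would behave identically if every string were replaced by arbitrary tokens, which is the intuition the argument relies on.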

Part 7: AI in Medicine and CDSS

- Evolution: AI has evolved from Rule-Based systems (Classical AI) to Machine Learning, and now Deep Learning (neural networks) and Generative AI.

-

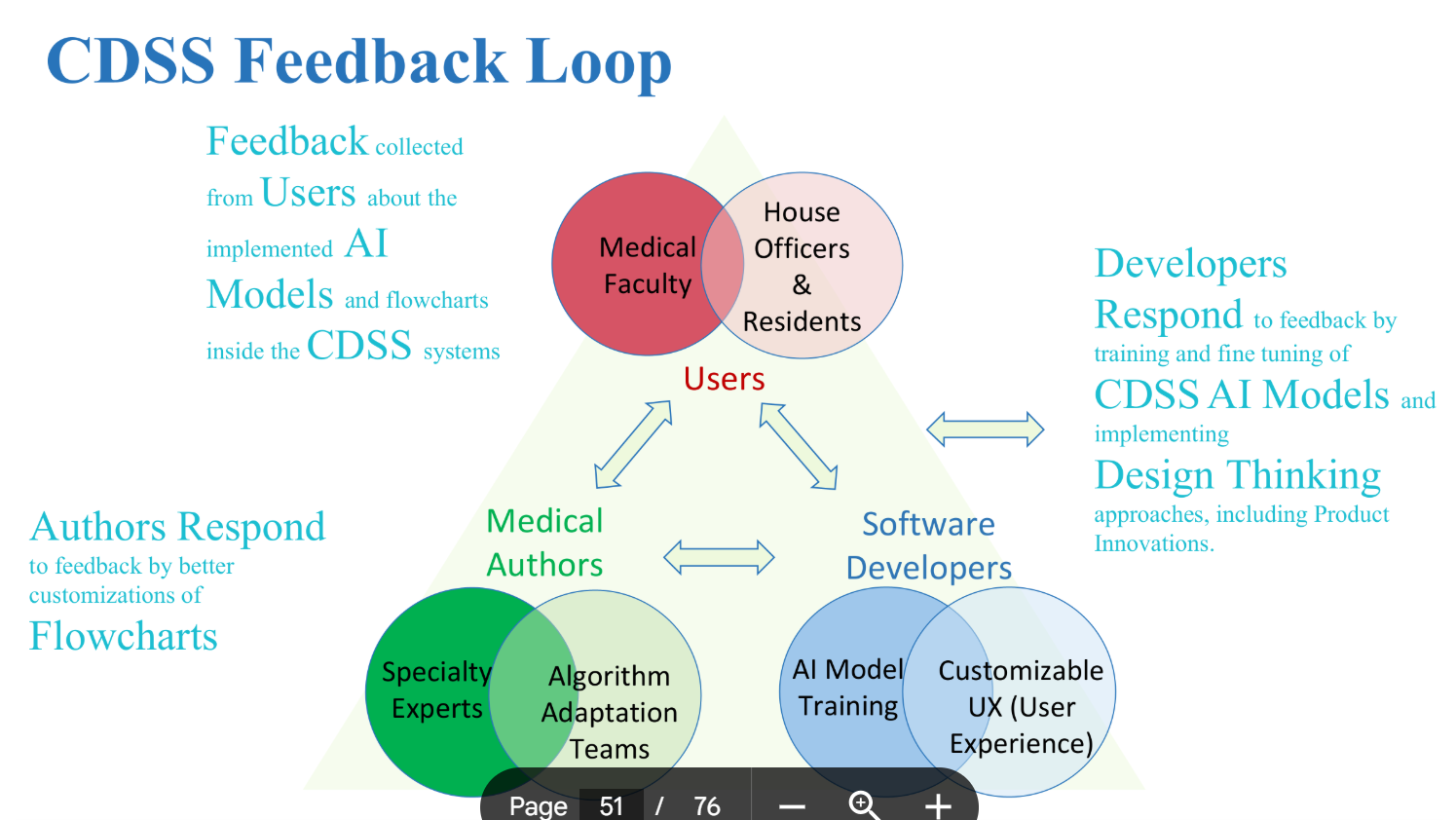

CDSS (Clinical Decision Support Systems): These systems take patient data (history, labs, symptoms) and use AI to automatically suggest diagnoses, investigations, and prescriptions.

- Rule-Based vs. LLMs: While Large Language Models (LLMs) are powerful, simple rule-based flowcharts are sometimes better because they are predictable, cheaper to run, and can be customized to local geographical needs. The best systems combine rule-based flowcharts with specialized AI and LLMs, continuously improving through a "Feedback Loop" with doctors.
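A rule-based flowchart with a feedback loop might look like the sketch below. Everything in it is an assumption made for illustration: the `Patient` fields, the rule identifiers, the suggestion texts, and the 38.0 °C threshold are hypothetical and are not clinical guidance or part of any real CDSS.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Patient:
    temperature_c: float
    has_cough: bool


@dataclass
class Suggestion:
    rule_id: str
    text: str


def suggest(patient: Patient) -> List[Suggestion]:
    """Walk a fixed flowchart: the same input always gives the same output."""
    suggestions: List[Suggestion] = []
    if patient.temperature_c >= 38.0:  # illustrative threshold only
        if patient.has_cough:
            suggestions.append(
                Suggestion("fever_cough", "Consider a respiratory work-up"))
        else:
            suggestions.append(
                Suggestion("fever_only", "Consider a fever work-up"))
    return suggestions


@dataclass
class FeedbackLog:
    """Counts how often doctors accept or reject each rule's suggestion."""
    accepted: Dict[str, int] = field(default_factory=dict)
    rejected: Dict[str, int] = field(default_factory=dict)

    def record(self, rule_id: str, doctor_agrees: bool) -> None:
        bucket = self.accepted if doctor_agrees else self.rejected
        bucket[rule_id] = bucket.get(rule_id, 0) + 1


log = FeedbackLog()
for s in suggest(Patient(temperature_c=38.6, has_cough=True)):
    log.record(s.rule_id, doctor_agrees=True)
print(log.accepted)
```

Because each suggestion carries a `rule_id`, a rule that doctors reject often can be found and revised by hand, which is one simple way to read the "Feedback Loop" and the predictability claim in the text.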

Part 8: Advanced Fields of AI

The lecture concludes by defining newer, human-imitating branches of AI:

- Narrative AI: AI that can understand and generate stories (e.g., analyzing a patient's evolving medical history, or generating scripts for TV shows like Grey's Anatomy).

- Expressive AI: AI that intersects with art, generating music, pictures (like Midjourney/DALL-E), or videos.

- Interactionist AI: AI embedded in physical "bodies" (like robots or self-driving cars) or virtual avatars that interact with their environment.

- Theory of Mind AI: The ultimate, currently theoretical goal. This would be an AI that truly understands that other beings have their own separate minds, emotions, and intentions.