The final lecture introduces Uncertainty Management in Rule-based Expert Systems, moving away from exact mathematics and into how systems handle ambiguity.

In classical logic, knowledge is assumed to be perfect: a statement is either exactly TRUE or exactly FALSE. However, most real-world problems do not provide clear-cut facts. Uncertainty is defined as the lack of exact knowledge needed to reach a perfectly reliable conclusion.

Sources of Uncertain Knowledge

Expert systems must navigate several sources of ambiguity:

- Weak Implications: It is often difficult to establish concrete correlations between the "IF" condition and the "THEN" action of a rule.

- Imprecise Language: Human language is inherently ambiguous, using vague terms like "often," "sometimes," or "frequently."

- Unknown Data: When data is missing, the system must accept an "unknown" value and proceed with approximate reasoning.

- Combining Expert Views: Large systems rely on multiple experts who frequently have contradictory opinions and conflicting rules.

Certainty Factors (CF) Theory

To handle this uncertainty, the lecture introduces Certainty Factors, an approach originally developed for the MYCIN expert system.

A Certainty Factor (cf) measures an expert's degree of belief in a hypothesis.

- It operates on a scale from -1.0 (Definitely False / maximum disbelief) to +1.0 (Definitely True / maximum belief).

- A value of 0 generally represents an "Unknown" state.

When a rule fires, the certainty factor assigned to its conclusion is propagated through the reasoning chain. Here is how the mathematics works for different rule structures:

1. Single Premise Rules

If a rule relies on a single piece of evidence, you simply multiply the certainty of the evidence by the certainty assigned to the rule.
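The single-premise case can be written as $cf(H, E) = cf(E) \times cf(\text{rule})$. The sketch below illustrates that product; the function name `single_premise_cf` and the numbers are hypothetical, chosen only for demonstration.

```python
def single_premise_cf(cf_evidence: float, cf_rule: float) -> float:
    """Propagate certainty through a one-premise rule: cf(E) * cf(rule)."""
    for value in (cf_evidence, cf_rule):
        if not -1.0 <= value <= 1.0:
            raise ValueError("certainty factors must lie in [-1.0, 1.0]")
    return cf_evidence * cf_rule


# Hypothetical values: the evidence is held with cf 0.5, the rule with cf 0.8.
print(single_premise_cf(0.5, 0.8))  # 0.4
```

Because both inputs lie in [-1.0, 1.0], the product does too, so a conclusion can never be held more strongly than the rule or its evidence.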

2. Conjunctive (AND) Rules

When a rule requires multiple conditions to be true (e.g., IF A is true AND B is true), the system takes the minimum certainty factor among all the conditions, and multiplies it by the rule's certainty.
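In symbols, the conjunctive case is $cf(H, E_1 \land \dots \land E_n) = \min[cf(E_1), \dots, cf(E_n)] \times cf(\text{rule})$. A minimal sketch follows, assuming the evidence certainties arrive as a plain list; `conjunctive_cf` and the numbers are illustrative, not taken from the lecture.

```python
def conjunctive_cf(evidence_cfs: list[float], cf_rule: float) -> float:
    """AND rule: the weakest condition limits the whole premise."""
    return min(evidence_cfs) * cf_rule


# Hypothetical values: A is held with cf 0.9, B with cf 0.5, the rule with cf 0.8.
print(conjunctive_cf([0.9, 0.5], 0.8))  # 0.4
```

Taking the minimum reflects that an AND premise is only as credible as its least certain condition.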

3. Disjunctive (OR) Rules

When a rule requires only one of several conditions to be true (e.g., IF A is true OR B is true), the system takes the maximum certainty factor among the conditions, and multiplies it by the rule's certainty.
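In symbols, the disjunctive case is $cf(H, E_1 \lor \dots \lor E_n) = \max[cf(E_1), \dots, cf(E_n)] \times cf(\text{rule})$. The sketch below mirrors that formula; `disjunctive_cf` and the numbers are hypothetical.

```python
def disjunctive_cf(evidence_cfs: list[float], cf_rule: float) -> float:
    """OR rule: the strongest condition carries the whole premise."""
    return max(evidence_cfs) * cf_rule


# Hypothetical values: A is held with cf 0.25, B with cf 0.5, the rule with cf 0.5.
print(disjunctive_cf([0.25, 0.5], 0.5))  # 0.25
```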

Combining Certainty Factors

The most complex part of this theory occurs when two different rules lead to the exact same conclusion. Common sense dictates that if two independent pieces of evidence support the same hypothesis, our confidence in that hypothesis should increase.

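To calculate this, the system merges the individual certainty factors ($cf_1$ and $cf_2$) using one of three formulas, chosen by their signs:

- If both are positive (Incremental Belief): $cf(cf_1, cf_2) = cf_1 + cf_2 \times (1 - cf_1)$

- If both are negative (Incremental Disbelief): $cf(cf_1, cf_2) = cf_1 + cf_2 \times (1 + cf_1)$

- If they have opposite signs (Conflicting Rules): $cf(cf_1, cf_2) = \dfrac{cf_1 + cf_2}{1 - \min(|cf_1|, |cf_2|)}$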
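The three merging cases can be sketched as a single function. This is a minimal illustration of the standard certainty-factor combination formulas, not code from the lecture; the name `combine_cf` and the sample values are assumptions.

```python
def combine_cf(cf1: float, cf2: float) -> float:
    """Merge two certainty factors that bear on the same hypothesis."""
    if cf1 > 0 and cf2 > 0:
        # Incremental belief: the second factor closes part of the remaining gap to +1.
        return cf1 + cf2 * (1 - cf1)
    if cf1 < 0 and cf2 < 0:
        # Incremental disbelief: the mirror image, moving toward -1.
        return cf1 + cf2 * (1 + cf1)
    # Opposite signs (or one factor equal to 0): conflicting evidence.
    denominator = 1 - min(abs(cf1), abs(cf2))
    if denominator == 0:
        raise ValueError("cannot combine total belief (+1) with total disbelief (-1)")
    return (cf1 + cf2) / denominator


# Hypothetical inputs, one per case:
print(combine_cf(0.5, 0.5))    # 0.75
print(combine_cf(-0.5, -0.5))  # -0.75
print(combine_cf(0.75, -0.5))  # 0.5
```

Two agreeing factors always yield a result stronger than either one alone, while a conflicting factor pulls the result back toward 0.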

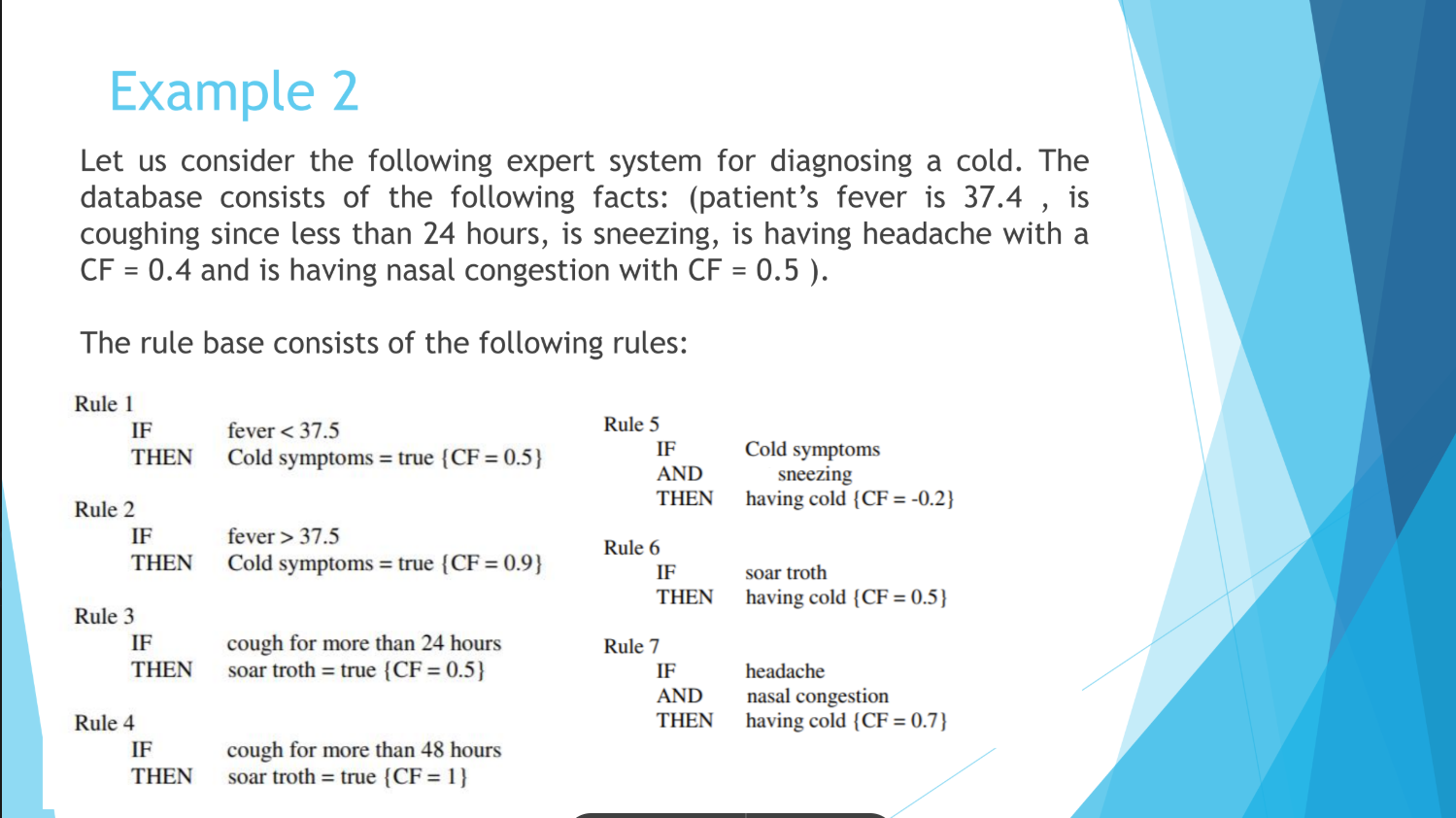

This is the step-by-step breakdown of how an expert system resolves the medical diagnosis tree in Example 2.

The goal of this network is to determine the final Certainty Factor (CF) for the hypothesis "HAVING COLD". The system does this by evaluating 7 interconnected rules based on patient symptoms.

(Note: The exact patient inputs are partially cut off in the source document, so a realistic set of assumed patient symptoms is used here to demonstrate the mathematical mechanics the system uses to reach a conclusion.)

Assumed Patient Evidence (Inputs):

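- cf(fever < 37.5) = …

- cf(fever > 37.5) = …

- cf(cough > 24h) = …

- cf(cough > 48h) = …

- cf(sneezing) = …

- cf(headache) = …

- cf(nasal congestion) = …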

Phase 1: Resolving the Intermediate Hypotheses

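We now have two sub-conclusions to resolve: "sore throat" and "cold symptoms."

1. Calculate "sore throat" (Rules 3 & 4)

- Rule 3: IF cough > 24h THEN sore throat

- Rule 4: IF cough > 48h THEN sore throat

- Combine (Incremental Belief): $cf_{R3} + cf_{R4} \times (1 - cf_{R3})$, where $cf_{R3}$ and $cf_{R4}$ are the certainty factors that Rules 3 and 4 each assign to "sore throat"

The result is the intermediate cf for "sore throat".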

2. Calculate "cold symptoms" (Rules 1 & 2)

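- Rule 1: IF fever < 37.5 THEN cold symptoms

- Rule 2: IF fever > 37.5 THEN cold symptoms

- Combine (Incremental Belief): $cf_{R1} + cf_{R2} \times (1 - cf_{R1})$, where $cf_{R1}$ and $cf_{R2}$ are the certainty factors that Rules 1 and 2 each assign to "cold symptoms"

The result is the intermediate cf for "cold symptoms".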
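As an illustration of Phase 1, the sketch below resolves one intermediate hypothesis from two single-premise rules, using Rules 3 and 4 as the pattern. Every number is a hypothetical placeholder rather than a value from the lecture, and `incremental_belief` is an illustrative name.

```python
def incremental_belief(cf1: float, cf2: float) -> float:
    """Combine two positive certainty factors for the same conclusion."""
    if cf1 <= 0 or cf2 <= 0:
        raise ValueError("the incremental belief formula needs two positive factors")
    return cf1 + cf2 * (1 - cf1)


# Hypothetical evidence and rule certainties (not the lecture's values).
cf_rule3 = 0.5 * 0.5  # cf(cough > 24h) * cf(Rule 3)
cf_rule4 = 1.0 * 0.5  # cf(cough > 48h) * cf(Rule 4)

intermediate = incremental_belief(cf_rule3, cf_rule4)
print(intermediate)  # 0.625
```

The intermediate value then serves as the evidence certainty for any later rule that uses this sub-conclusion as a premise.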

Phase 2: Evaluating the Final Hypothesis Rules

Now we evaluate the three rules that point to "HAVING COLD", using our newly calculated intermediate CFs where necessary.

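- Rule 5: IF cold symptoms AND sneezing THEN having cold

- $cf_5 = \min[cf(\text{cold symptoms}), cf(\text{sneezing})] \times cf(\text{Rule 5})$

- (Notice that this rule creates disbelief because the CF is negative.)

- Rule 6: IF sore throat THEN having cold

- $cf_6 = cf(\text{sore throat}) \times cf(\text{Rule 6})$

- Rule 7: IF headache AND nasal congestion THEN having cold

- $cf_7 = \min[cf(\text{headache}), cf(\text{nasal congestion})] \times cf(\text{Rule 7})$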
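One way to picture Phase 2 is to store each rule as data and fire it against the known certainties. The sketch below assumes a small hypothetical `Rule` record and `fire` helper; all certainty values, including the negative CF on Rule 5, are placeholders rather than the lecture's numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    premises: tuple[str, ...]  # conditions joined by AND
    conclusion: str
    cf: float                  # certainty the expert attached to the rule


def fire(rule: Rule, facts: dict[str, float]) -> float:
    """Return the CF that a conjunctive rule contributes to its conclusion."""
    return min(facts[premise] for premise in rule.premises) * rule.cf


# Hypothetical certainties: two intermediate results plus three observed symptoms.
facts = {
    "cold symptoms": 0.5,
    "sore throat": 0.75,
    "sneezing": 1.0,
    "headache": 0.5,
    "nasal congestion": 1.0,
}
rules = {
    5: Rule(("cold symptoms", "sneezing"), "having cold", -0.5),
    6: Rule(("sore throat",), "having cold", 0.5),
    7: Rule(("headache", "nasal congestion"), "having cold", 0.5),
}

contributions = {number: fire(rule, facts) for number, rule in rules.items()}
print(contributions)  # {5: -0.25, 6: 0.375, 7: 0.25}
```

A single-premise rule is just the one-element case of the AND rule, so the same `fire` helper covers Rule 6.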

Phase 3: The Final Combination

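We have three independent rules firing for the final diagnosis. Call their contributions $cf_5$, $cf_6$, and $cf_7$ (from Rules 5, 6, and 7).

Merge 1: Combine the positive evidence (Rules 6 and 7)

Using the Incremental Belief formula: $cf_{6,7} = cf_6 + cf_7 \times (1 - cf_6)$

Merge 2: Combine the positive result with the negative evidence (Rule 5)

Because we are combining a positive value ($cf_{6,7}$) with a negative one ($cf_5$), the Conflicting Rules formula applies: $cf_{final} = \dfrac{cf_{6,7} + cf_5}{1 - \min(|cf_{6,7}|, |cf_5|)}$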
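The two-stage merge can be sketched as follows. The three rule contributions are hypothetical placeholders, not the lecture's values, and `combine_cf` is an illustrative helper implementing the three sign-dependent combination cases.

```python
def combine_cf(cf1: float, cf2: float) -> float:
    """Merge two certainty factors that bear on the same hypothesis."""
    if cf1 > 0 and cf2 > 0:   # incremental belief
        return cf1 + cf2 * (1 - cf1)
    if cf1 < 0 and cf2 < 0:   # incremental disbelief
        return cf1 + cf2 * (1 + cf1)
    denominator = 1 - min(abs(cf1), abs(cf2))  # conflicting signs
    if denominator == 0:
        raise ValueError("cannot combine total belief (+1) with total disbelief (-1)")
    return (cf1 + cf2) / denominator


# Hypothetical contributions of Rules 5, 6, and 7 to "having cold".
cf_rule5, cf_rule6, cf_rule7 = -0.25, 0.375, 0.25

merge1 = combine_cf(cf_rule6, cf_rule7)  # both positive
final_cf = combine_cf(merge1, cf_rule5)  # positive result vs. negative evidence

print(merge1)    # 0.53125
print(final_cf)  # 0.375
```

With these placeholder numbers the negative contribution lowers the merged belief from 0.53125 to 0.375 without reversing its sign.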

Conclusion

The system uses the conflicting evidence (in this specific rule base, the presence of sneezing combined with cold symptoms slightly reduces the likelihood of a standard cold) to temper its final diagnosis. The final Certainty Factor for "HAVING COLD" is the value produced by Merge 2.