Introduction to Neuro-Fuzzy Systems

The lecture focuses on how to combine Neural Networks (NN) with Fuzzy Logic to develop a Neuro-Fuzzy System (NFS). The primary goal of an NFS is to improve the performance of a fuzzy reasoning tool (or fuzzy logic controller) by representing it using the learning structure of a neural network.

These two concepts can be combined in different ways, for example as a "Fuzzy Neural Network" in which individual neurons use fuzzy set theory, but the Neuro-Fuzzy System is far more popular and practical for solving real-world problems. The lecture specifically concentrates on an NFS based on the Mamdani Approach.

The 5-Layer Architecture of an NFS

An NFS using the Mamdani approach is structured into five distinct layers, each performing a specific function in the fuzzy inference process:

- Layer 1 (Input Layer): This layer uses a linear transfer function. Its purpose is simply to receive the real crisp inputs and pass them forward. The outputs of this layer are exactly the same as the corresponding inputs.

- Layer 2 (Fuzzification Layer): This layer takes the crisp inputs and determines their degree of belonging (membership) to different fuzzy linguistic terms (like "Near", "Far", "Small", "Medium"). It uses defined membership function distributions, such as right-angled or isosceles triangles.

- Layer 3 (AND Operation Layer): This layer looks at the combinations of fuzzified inputs to form the antecedents ("If" parts) of the fuzzy rules. It performs logical AND operations, typically using the "minimum" mathematical function, to determine the firing strength of each combination.

- Layer 4 (Fuzzy Inference Layer): This layer maps the activated combinations to their corresponding output consequences ("Then" parts) based on a predefined Rule Base. It outputs the set of fired rules along with their respective strengths.

-

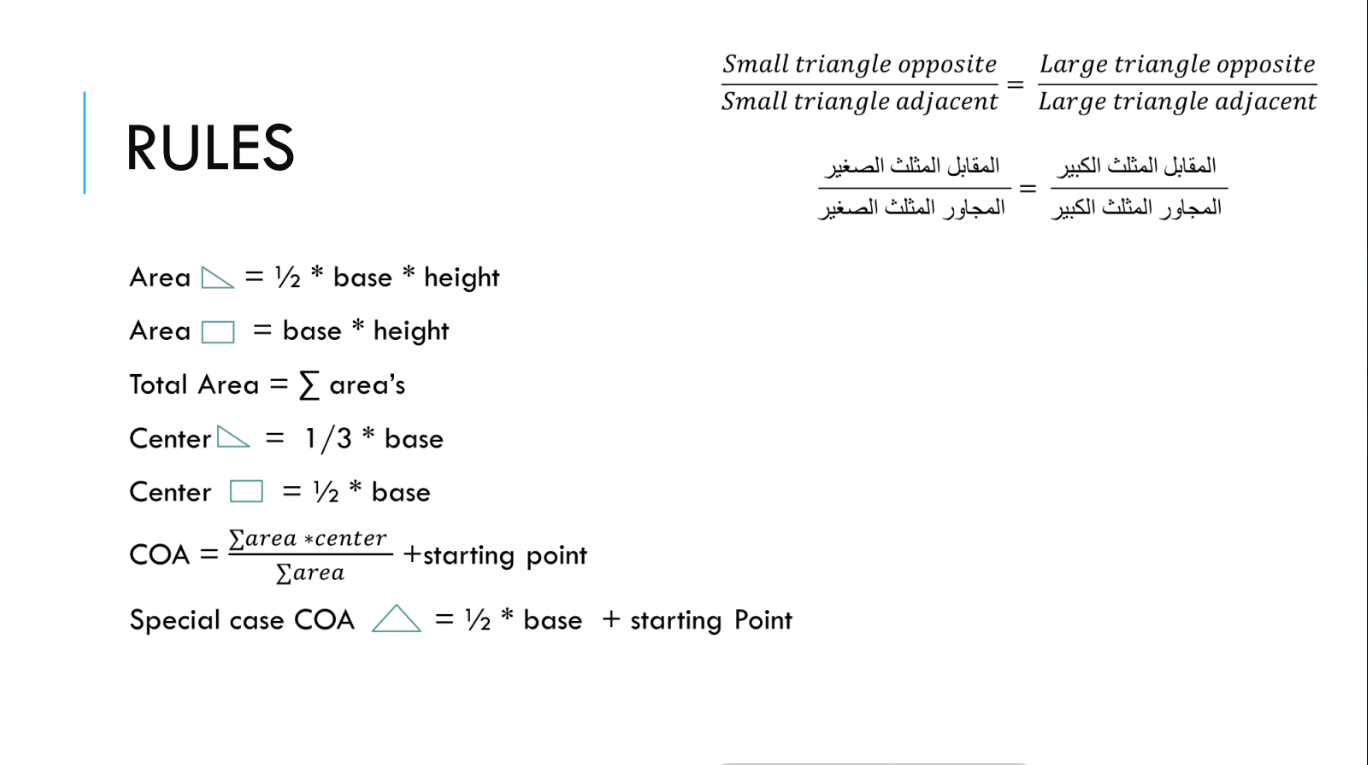

Layer 5 (Defuzzification Layer): This final layer aggregates the fuzzy outputs from Layer 4 and converts them back into a single, usable crisp number. The lecture highlights the Center of Sums Method for this calculation:

(Where

is the area of the respective fired rule shape, and is the center of that area).

To optimize this system, the network can be tuned using methods like Batch mode training, Backpropagation (BP) algorithms, or Genetic Algorithms (GA).

Step-by-Step Numerical Example Walkthrough

The bulk of the lecture is dedicated to a numerical example to calculate the prediction deviation of the system.

The Setup:

- Inputs:

- Target Output:

- Linguistic Terms:

  - First input: Near (NR), Far (FR), Very Far (VFR).

  - Second input: Small (SM), Medium (M), Large (LR).

  - Output: Low (LW), High (H), Very High (VH).

Here is how the data flows through the 5 layers:

Layer 1

The inputs are passed directly through.

Layer 2 (Fuzzification)

We determine where the inputs fall on the modified triangular membership graphs.

- For the first input: It falls between the "Near" (NR) and "Far" (FR) triangles. Based on the graph slopes, the calculated membership values are:

- For the second input: It falls between the "Small" (SM) and "Medium" (M) triangles.
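The slope-based reading of a membership value can be sketched in a few lines. This is a minimal illustration, not the lecture's own code: the function name `triangular_membership` and the breakpoints (0, 4, 8) are hypothetical and are not taken from the lecture's graphs.

```python
def triangular_membership(x: float, left: float, peak: float, right: float) -> float:
    """Degree of membership of x in a triangle with feet at left/right and apex at peak.

    A right-angled triangle is expressed by setting peak equal to left or right.
    """
    if x == peak:
        return 1.0
    if x <= left or x >= right:
        return 0.0
    if x < peak:
        return (x - left) / (peak - left)   # rising edge
    return (right - x) / (right - peak)     # falling edge


# Hypothetical breakpoints, chosen only for illustration.
x = 3.0
mu_near = triangular_membership(x, 0.0, 0.0, 4.0)  # right-angled, apex at the left foot
mu_far = triangular_membership(x, 0.0, 4.0, 8.0)   # isosceles
print(mu_near, mu_far)  # 0.25 0.75
```

An input lying between two adjacent triangles gets a non-zero membership in both, which is why each input contributes two active terms to the next layer.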

Layer 3 (AND Operation)

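Because each of the two inputs has 2 non-zero membership states, there are 2 × 2 = 4 possible combinations. Taking the minimum of each pair gives the firing strengths:

- NR AND SM: 0.25

- NR AND M: 0.25

- FR AND SM: 0.272727

- FR AND M: 0.727272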
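The minimum-based AND operation can be sketched as follows. The membership values in this snippet are assumptions, chosen only so that the minima agree with the rule strengths quoted in this walkthrough; in particular the value 0.75 for FR is a guess (any value of at least 0.727272 would give the same strengths). The function name `firing_strengths` is hypothetical.

```python
from itertools import product


def firing_strengths(memberships_1: dict, memberships_2: dict) -> dict:
    """Return min(mu_1, mu_2) for every pair of active linguistic terms."""
    return {
        (term_1, term_2): min(mu_1, mu_2)
        for (term_1, mu_1), (term_2, mu_2) in product(
            memberships_1.items(), memberships_2.items()
        )
    }


# Assumed membership values (not stated in the text above).
first_input = {"NR": 0.25, "FR": 0.75}
second_input = {"SM": 0.272727, "M": 0.727272}

strengths = firing_strengths(first_input, second_input)
for (term_1, term_2), strength in strengths.items():
    print(f"{term_1} AND {term_2}: {strength}")
```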

Layer 4 (Fuzzy Inference)

Using the colored Rule Base grid from the slides, we map the 4 activated combinations to their specific output consequence and attach the strengths calculated in Layer 3:

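- If the first input is NR AND the second input is SM, then the output is LW (Strength: 0.25)

- If the first input is NR AND the second input is M, then the output is LW (Strength: 0.25)

- If the first input is FR AND the second input is SM, then the output is H (Strength: 0.272727)

- If the first input is FR AND the second input is M, then the output is H (Strength: 0.727272)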
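The rule-base lookup amounts to a table indexed by the pair of input terms. The sketch below holds only the four cells used in this walkthrough, since the rest of the grid from the slides is not reproduced here; `RULE_BASE` and `fire_rules` are hypothetical names.

```python
# Only the four rule-base cells that fire in the walkthrough.
RULE_BASE = {
    ("NR", "SM"): "LW",
    ("NR", "M"): "LW",
    ("FR", "SM"): "H",
    ("FR", "M"): "H",
}


def fire_rules(strengths: dict, rule_base: dict) -> list:
    """Attach each non-zero firing strength to the consequent of its rule."""
    return [
        (antecedent, rule_base[antecedent], strength)
        for antecedent, strength in strengths.items()
        if strength > 0.0
    ]


# Strengths as listed for the four fired rules.
layer3_strengths = {
    ("NR", "SM"): 0.25,
    ("NR", "M"): 0.25,
    ("FR", "SM"): 0.272727,
    ("FR", "M"): 0.727272,
}

fired = fire_rules(layer3_strengths, RULE_BASE)
for (term_1, term_2), consequent, strength in fired:
    print(f"IF {term_1} AND {term_2} THEN {consequent} (strength {strength})")
```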

Layer 5 (Defuzzification)

We now have four "fired" fuzzy shapes. Because the strengths act as a ceiling, the triangular output shapes are cut off (truncated) at their respective strength levels, turning them into trapezoids (or smaller shapes).

Using geometric formulas (as shown in the handwritten notes), the area and the center of area are calculated for each truncated shape:

- Rule 1 (LW):

- Rule 2 (LW):

- Rule 3 (H):

- Rule 4 (H):

Finally, the Center of Sums formula is applied to these values:

The Defuzzification Layer (Layer 5) is the critical final step in a Neuro-Fuzzy System. Its entire purpose is to translate the fuzzy, linguistic conclusions reached by the network back into a concrete, real-world number that a system can actually use.

If a fuzzy controller is managing an air conditioner, the rules might determine that the cooling fan needs to spin at a speed that is a combination of "Medium" and "Fast." The defuzzification layer calculates exactly how many Revolutions Per Minute (RPM) that combination translates to.

1. The Hand-off from Layer 4 (Clipping)

Before Layer 5 can do its math, it receives the "fired" rules from Layer 4.

Each fired rule has a specific strength (a number between 0 and 1 calculated by the AND operations). This strength acts as a horizontal blade that slices off the top of the output membership triangles.

- If a rule has a strength of 0.25, the output triangle is truncated horizontally at the 0.25 mark on the y-axis.

- The original triangle is transformed into a trapezoid. The stronger the rule fires, the taller the resulting trapezoid.
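The area of a clipped shape follows from similar triangles. As a sketch, assume an output triangle of unit height with base width $b$, clipped at strength $h$ (both symbols are introduced here for illustration and are not the lecture's notation). At height $h$ the triangle has narrowed to a width of $b(1-h)$, so the trapezoid has parallel sides $b$ and $b(1-h)$:

$$A = \frac{h}{2}\,\bigl[\,b + b(1-h)\,\bigr] = b\,h\left(1 - \frac{h}{2}\right)$$

For $h = 1$ this reduces to the full triangle area $b/2$, and for $h = 0.25$ it gives $A = 0.21875\,b$. The result holds for right-angled and isosceles triangles alike, because the width at a given height depends only on the base and the height.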

2. The Geometric Breakdown

Layer 5 looks at each of these resulting shapes (the trapezoids) and calculates two specific properties for each one:

- Area ($A_i$): How much "weight" or influence this specific rule should have on the final decision. A taller, wider shape has more area and therefore more pull.

- Center of Area ($f_i$): The balance point (centroid) of that specific shape on the x-axis.

(Note: Calculating the area and centroid of a trapezoid requires breaking it down geometrically, which is why your lecture notes show specific equations for finding these values based on the base widths and the cutoff heights).
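Both properties can be computed in closed form for a clipped triangle. The sketch below assumes a unit-height triangle described by its two feet and its apex position, and it uses the true geometric centroid of the trapezoid; the lecture's handwritten equations may use a different but equivalent breakdown. The function name `clipped_triangle` and the example breakpoints are hypothetical.

```python
def clipped_triangle(left: float, peak: float, right: float, strength: float):
    """Area and x-centroid of a unit-height triangle truncated at `strength`.

    left/right are the feet of the triangle and peak is the x-position of its
    apex (peak == left or peak == right gives a right-angled triangle).
    """
    if not 0.0 < strength <= 1.0:
        raise ValueError("strength must lie in (0, 1]")
    base = right - left
    # Trapezoid with parallel sides `base` and `base * (1 - strength)`.
    area = base * strength * (1.0 - strength / 2.0)
    # Centroid: midpoint of the base, shifted toward the apex for skewed triangles.
    mid = (left + right) / 2.0
    skew = peak - mid
    center = mid + skew * strength * (3.0 - 2.0 * strength) / (3.0 * (2.0 - strength))
    return area, center


# Hypothetical isosceles output triangle on [0, 10], clipped at strength 0.25.
area, center = clipped_triangle(0.0, 5.0, 10.0, 0.25)
print(area, center)  # 2.1875 5.0
```

For an isosceles triangle the skew term vanishes, so the centroid stays at the apex position regardless of the clipping height.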

3. The Center of Sums Calculation

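Once Layer 5 has the area ($A_i$) and the center of area ($f_i$) of every fired shape, it computes the crisp output as the area-weighted average of the centers:

$$\text{Crisp output} = \frac{\sum_{i} A_i f_i}{\sum_{i} A_i}$$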

Why use Center of Sums? There are other defuzzification methods (like the Center of Gravity method), but Center of Sums is highly popular in Neuro-Fuzzy Systems because it is computationally faster. Instead of trying to calculate the complex mathematical union of overlapping shapes, Center of Sums simply adds the areas together, even if they overlap. This speed is crucial when training the network through many iterations.
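The weighted average itself is short enough to sketch directly. The function name `center_of_sums` and the sample numbers are hypothetical; they are not the areas and centers from the lecture's example.

```python
def center_of_sums(areas: list, centers: list) -> float:
    """Area-weighted average of the centers of the fired shapes.

    Each shape is counted separately, so overlapping regions contribute
    once per shape instead of being merged into a union.
    """
    if len(areas) != len(centers):
        raise ValueError("areas and centers must have the same length")
    total_area = sum(areas)
    if total_area == 0.0:
        raise ValueError("no rule fired: total area is zero")
    return sum(a * f for a, f in zip(areas, centers)) / total_area


# Made-up areas and centers for two fired shapes.
crisp = center_of_sums([1.0, 3.0], [2.0, 6.0])
print(crisp)  # 5.0
```

The larger shape pulls the result toward its own center, which is the "more area, more pull" behaviour described above.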

Final Calculation

The final step is to find the deviation in prediction. We subtract our predicted output from the known target output:

- Target Output =

- Predicted Output =

- Deviation: