To solve a problem, an agent needs a formalized model. The slides break down this specific vacuum environment into several key components:

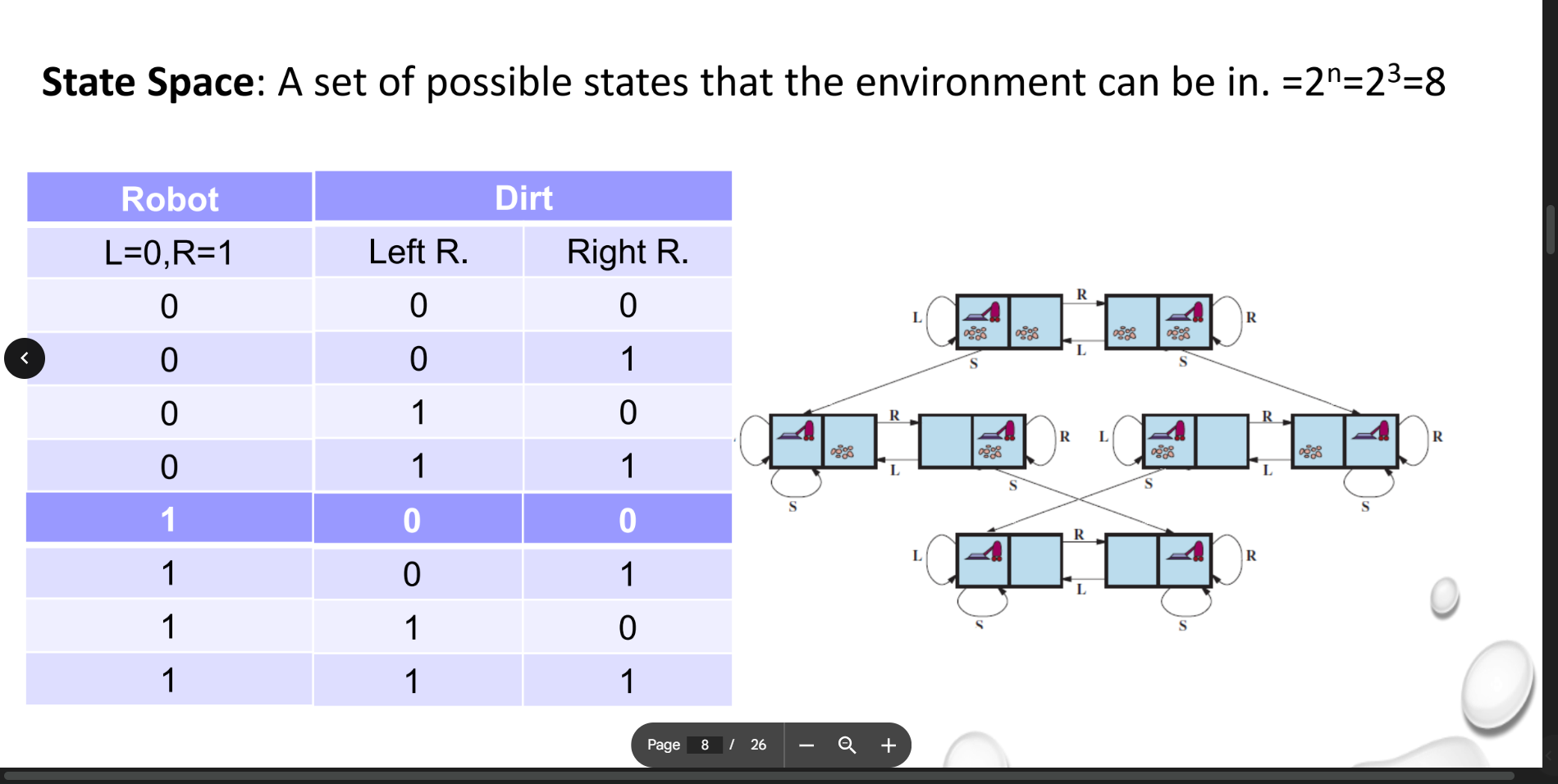

1. The State Space

The state space is the set of all configurations the environment can be in. For the two-cell vacuum world, a state is defined by three variables:

- Robot Location: Is it in the Left room (0) or the Right room (1)?
- Dirt in Left Room: Is it clean (0) or dirty (1)?
- Dirt in Right Room: Is it clean (0) or dirty (1)?

Because there are three variables, each with two possible values, the total number of states in this model is $2 \times 2 \times 2 = 2^3 = 8$.

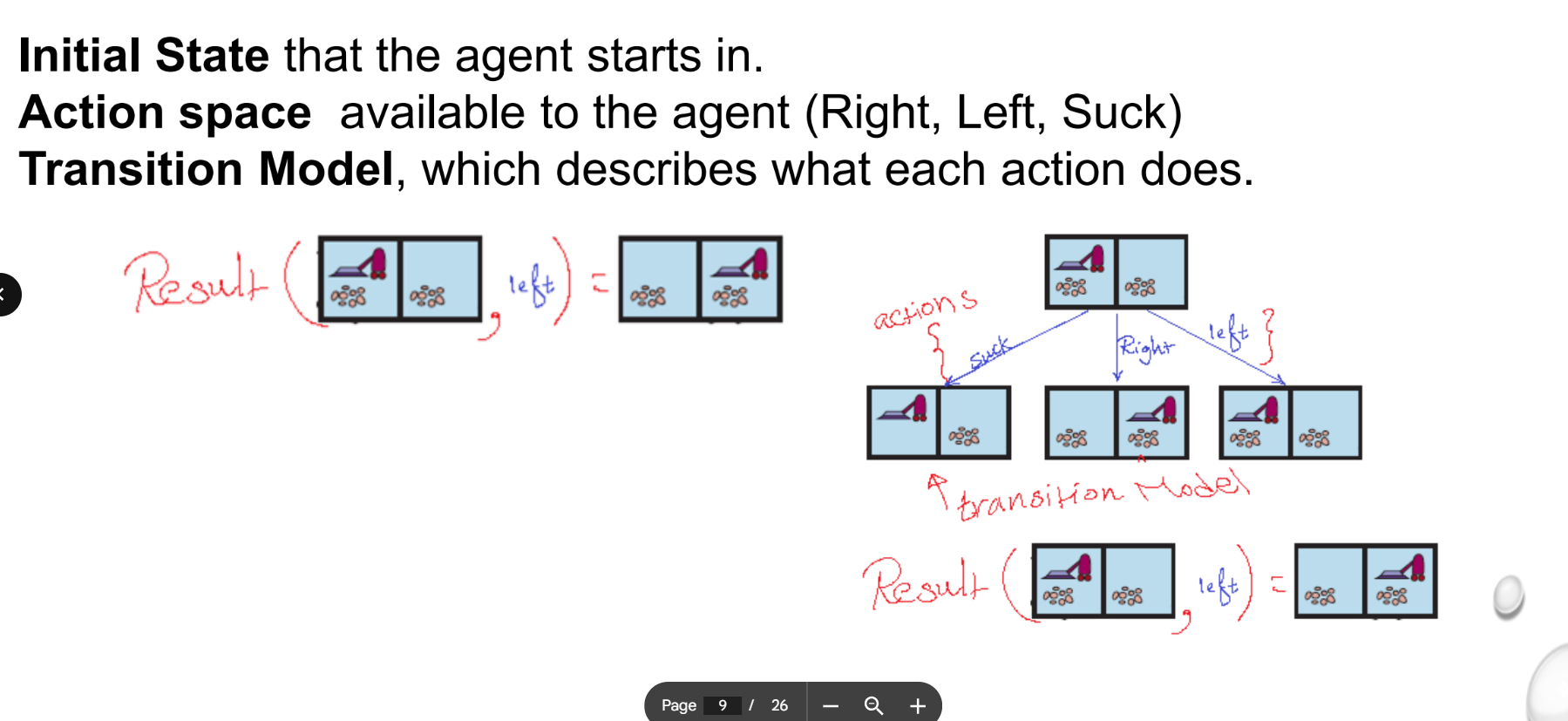
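The count can be checked by enumerating the states directly. The following is a minimal sketch, assuming the 0/1 encoding described above; the tuple layout `(location, dirt_left, dirt_right)` and the name `STATES` are illustrative choices, not taken from the slides.

```python
from itertools import product

# Each state is a tuple (location, dirt_left, dirt_right), every value 0 or 1.
# This tuple layout is an assumption made for illustration.
STATES = list(product((0, 1), repeat=3))

for state in STATES:
    print(state)

print(len(STATES))  # 8
```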

2. The Action Space

This defines what the agent is actually capable of doing in any given state. In this environment, the agent has three available actions:

- Move Left (L)
- Move Right (R)
- Suck dirt (S)

3. The Transition Model (Successor Function)

The transition model (represented by the arrows in the state-space graph) describes the exact effect of each action. It defines what the "next state" will be after an action is taken.

- For example, if the agent is in a state where there is dirt in its current room, applying the "Suck" action transitions the environment to a new state where that room is now clean.
- If the agent is in the right room and applies the "Move Left" action, it transitions to a state where the robot's location is now the left room.
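The two examples above can be sketched as a successor function. This is a minimal illustration, not the slides' own code: the function name `result`, the state tuple `(location, dirt_left, dirt_right)`, and the rule that moving toward a wall leaves the robot in place are all assumptions.

```python
def result(state, action):
    """Return the next state after applying 'L', 'R', or 'S' (hypothetical helper)."""
    loc, dirt_left, dirt_right = state
    if action == "L":
        # Assumed: moving Left from the left room leaves the robot where it is.
        return (0, dirt_left, dirt_right)
    if action == "R":
        # Assumed: moving Right from the right room leaves the robot where it is.
        return (1, dirt_left, dirt_right)
    if action == "S":
        # Suck cleans only the room the robot is currently in.
        if loc == 0:
            return (0, 0, dirt_right)
        return (1, dirt_left, 0)
    raise ValueError(f"unknown action: {action!r}")


print(result((0, 1, 1), "S"))  # (0, 0, 1): left room is now clean
print(result((1, 0, 1), "L"))  # (0, 0, 1): robot is now in the left room
```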

4. Goal States and Action Costs

To evaluate how well the agent is doing, two final components are needed:

- Goal States: The specific state (or states) the agent is trying to achieve. In the vacuum world, this is typically any state where both rooms are clean (dirt = 0), regardless of where the robot is.
- Action Cost: A numerical penalty applied for taking actions, which could represent distance, power consumption, or time.
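A goal test and a path cost can be sketched in a few lines. The names `is_goal` and `path_cost` are hypothetical, and the uniform cost of 1 per action is an assumption; the slides only say that the cost could stand for distance, power, or time.

```python
def is_goal(state):
    """Goal test: both rooms are clean, wherever the robot is."""
    _, dirt_left, dirt_right = state
    return dirt_left == 0 and dirt_right == 0


def path_cost(actions, step_cost=1):
    """Total cost of an action sequence, assuming every action costs the same."""
    return step_cost * len(actions)


print(is_goal((1, 0, 0)))            # True
print(is_goal((0, 1, 0)))            # False
print(path_cost(["S", "R", "S"]))    # 3
```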

Example of three-cell vacuum world

To find the total number of possible states in this vacuum-cleaner world, we need to consider two independent variables: the location of the vacuum cleaner and the status of the rooms.

1. Vacuum Cleaner Location

The vacuum cleaner can be in any one of the three rooms at a given time.

- Locations: Room A, Room B, or Room C.
- Total possibilities: 3

2. Room Status (Clean/Dirty)

Each of the three rooms has two possible conditions: it is either Dirty or Clean. Since the status of one room does not depend on the others, the number of status combinations for $n$ rooms is $2^n$:

- Status per room: 2 (Dirty or Clean)
- Number of rooms: 3
- Total status combinations: $2^3 = 8$

3. Total State Space

To find the total number of distinct states, we multiply the number of possible vacuum locations by the number of possible room configurations:

$$3 \times 8 = 24$$

There are 24 unique states in this environment.

Breakdown of the 8 Room Configurations

If we label the rooms 1, 2, and 3 (Rooms A, B, and C above, where D = Dirty and C = Clean), the 8 possible "world" configurations are:

- D, D, D
- D, D, C
- D, C, D
- D, C, C
- C, D, D
- C, D, C
- C, C, D
- C, C, C

Each of these 8 configurations can exist while the vacuum is in Room 1, Room 2, or Room 3, resulting in the $8 \times 3 = 24$ total states.
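The same enumeration can be reproduced programmatically. This is a sketch under assumed names (`LOCATIONS`, `configs`, `states`) and an assumed tuple layout `(location, room1, room2, room3)`; `itertools.product` happens to yield the configurations in the same order as the list above.

```python
from itertools import product

LOCATIONS = (1, 2, 3)                      # room the vacuum is in
configs = list(product("DC", repeat=3))    # status of rooms 1, 2, 3

# Pair every location with every room configuration.
states = [(loc, *cfg) for loc in LOCATIONS for cfg in configs]

for cfg in configs:
    print(", ".join(cfg))

print(len(configs), len(states))  # 8 24
```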

To map out the transition model, we define the Actions the agent can take and how those actions move the agent from one state to another. In a standard vacuum-world problem, the agent typically has three primary actions: Left, Right, and Suck.

1. The Transition Rules

A transition is defined as a mapping from a current state and an action to a resulting state, $(s, a) \rightarrow s'$:

- Left / Right: These actions change the Location variable.
  - If the agent is in Room 1 and moves Left, it typically stays in Room 1 (hitting a "wall").
  - If it is in Room 1 and moves Right, it transitions to Room 2.
- Suck: This action changes the Status variable of the current room only.
  - If the current room is Dirty, it becomes Clean.
  - If the current room is already Clean, the state remains unchanged.
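These rules can be written as a single successor function. The sketch below is illustrative only: the name `result`, the constant `N_ROOMS`, and the state layout `(location, room1, room2, room3)` are assumptions, as is the symmetric rule that moving Right from Room 3 also hits a wall (the notes only mention the left wall).

```python
N_ROOMS = 3


def result(state, action):
    """Apply 'Left', 'Right', or 'Suck' to a state and return the next state."""
    loc, *rooms = state            # loc is 1..3, rooms is a list of 'D'/'C'
    if action == "Left":
        loc = max(1, loc - 1)      # wall on the left: stay in Room 1
    elif action == "Right":
        loc = min(N_ROOMS, loc + 1)  # assumed wall on the right: stay in Room 3
    elif action == "Suck":
        rooms[loc - 1] = "C"       # only the current room changes
    else:
        raise ValueError(f"unknown action: {action!r}")
    return (loc, *rooms)


print(result((1, "D", "D", "D"), "Left"))   # (1, 'D', 'D', 'D')
print(result((1, "D", "D", "D"), "Right"))  # (2, 'D', 'D', 'D')
print(result((2, "D", "D", "D"), "Suck"))   # (2, 'D', 'C', 'D')
```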

2. State-Space Graph

A state-space graph visualizes these 24 states as nodes and the actions as edges (arrows) connecting them.

3. Example Transition Path

Let's look at a subset of the transitions starting from a completely dirty world:

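| Current State (Loc,R1,R2,R3) | Action | Resulting State (Loc,R1,R2,R3) |
|---|---|---|
| (1, D, D, D) | Suck | (1, C, D, D) |
| (1, C, D, D) | Right | (2, C, D, D) |
| (2, C, D, D) | Suck | (2, C, C, D) |
| (2, C, C, D) | Right | (3, C, C, D) |
| (3, C, C, D) | Suck | (3, C, C, C) |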
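The action sequence in the table (Suck, Right, Suck, Right, Suck) can be replayed step by step. This sketch assumes the vacuum starts in Room 1 of a completely dirty world; the helper `result` and the list `trace` are hypothetical names introduced for illustration.

```python
def result(state, action):
    """Hypothetical successor function for the three-room world."""
    loc, *rooms = state
    if action == "Left":
        loc = max(1, loc - 1)
    elif action == "Right":
        loc = min(len(rooms), loc + 1)
    elif action == "Suck":
        rooms[loc - 1] = "C"
    return (loc, *rooms)


state = (1, "D", "D", "D")  # assumed start: Room 1, every room dirty
trace = []
for action in ["Suck", "Right", "Suck", "Right", "Suck"]:
    next_state = result(state, action)
    trace.append((state, action, next_state))
    state = next_state

for current, action, nxt in trace:
    print(current, action, "->", nxt)

print(state)  # (3, 'C', 'C', 'C')
```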

4. Complexity and Search

In AI terms, the Goal Test for this agent is to reach any state where all rooms are "Clean," regardless of where the vacuum ends up. Since there are 3 possible locations for the vacuum in a clean world, there are 3 Goal States out of the 24 total states.
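The count of goal states can be confirmed by filtering the full state list with a goal test. This is a sketch with assumed names (`states`, `is_goal`, `goals`) and the same assumed layout `(location, room1, room2, room3)`.

```python
from itertools import product

# All 24 states: 3 locations x 8 room configurations.
states = [(loc, *cfg) for loc in (1, 2, 3) for cfg in product("DC", repeat=3)]


def is_goal(state):
    """Goal test: every room is Clean; the vacuum's location is ignored."""
    return all(status == "C" for status in state[1:])


goals = [s for s in states if is_goal(s)]
print(goals)                     # [(1, 'C', 'C', 'C'), (2, 'C', 'C', 'C'), (3, 'C', 'C', 'C')]
print(len(goals), len(states))   # 3 24
```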

Rest of Lecture

1. Evaluating Search Algorithms

Once a search problem is converted from a state-space graph into a search tree, the algorithm systematically explores the "fringe" (the nodes that have been generated but not yet expanded). To judge whether a specific search algorithm is good, we evaluate it on four performance metrics:

- Completeness: Is the algorithm mathematically guaranteed to find a solution if one actually exists? Will it correctly report failure if no solution exists?
- Optimality: Does it find the best solution? (i.e., the one with the lowest path cost among all possible solutions).
- Time Complexity: How long does it take to find the solution? In AI, this isn't just measured in seconds, but abstractly by the number of states and actions the algorithm has to consider.
- Space Complexity: How much memory is required to keep track of the search tree during the process?

To calculate these time and space complexities, the lecture introduces three key mathematical parameters of the tree:

- $b$: The maximum branching factor (the maximum number of successors any given node can have).
- $d$: The depth of the shallowest optimal solution.
- $m$: The maximum depth of the entire state space (which could be infinite).
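To get a feel for how these parameters drive complexity, one can count the nodes of a uniform tree in which every node has exactly $b$ successors: depths 0 through $d$ contain $1 + b + b^2 + \dots + b^d$ nodes. The notes do not state this formula, so treat the sketch below as a standard illustration rather than the lecture's own analysis; the function name and the values $b = 3$, $d = 5$ are chosen only as an example.

```python
def nodes_up_to_depth(b, d):
    """Nodes in a uniform tree with branching factor b, counting depths 0..d."""
    return sum(b ** level for level in range(d + 1))


# Example values only: 3 actions per state, solution at depth 5.
print(nodes_up_to_depth(3, 5))  # 364
print(nodes_up_to_depth(3, 10))
```

Even with a small branching factor, the count grows exponentially with depth, which is why both time and space are usually expressed in terms of $b$ and $d$ (or $m$).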

2. The Node Data Structure

To actually program a search algorithm, the agent needs a specific data structure (the "infrastructure") to build and keep track of the search tree in memory.

For every node $n$ in the tree, the data structure stores four components:

- STATE: The specific configuration of the environment this node represents.
- PARENT: A pointer to the previous node in the search tree that generated this current node. This is crucial for backtracking to find the final sequence of actions once the goal is reached.
- ACTION: The specific move that was applied to the PARENT to generate this node.
- PATH-COST: Traditionally denoted as $g(n)$, this is the total cumulative cost of the path from the initial state down to this node.
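The four fields map naturally onto a small record type. The following is a minimal sketch, not the lecture's implementation: the class name `Node`, the helpers `child_node` and `solution`, and the default step cost of 1 are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass
class Node:
    state: Any                        # STATE
    parent: Optional["Node"] = None   # PARENT (None for the root)
    action: Optional[str] = None      # ACTION applied to the parent
    path_cost: float = 0.0            # PATH-COST from the root to this node


def child_node(parent, action, next_state, step_cost=1.0):
    """Build the node reached from `parent` by `action` (assumed uniform cost)."""
    return Node(next_state, parent, action, parent.path_cost + step_cost)


def solution(node):
    """Follow PARENT pointers back to the root and return the action sequence."""
    actions = []
    while node.parent is not None:
        actions.append(node.action)
        node = node.parent
    return actions[::-1]


root = Node(state=(1, "D", "D", "D"))
n1 = child_node(root, "Suck", (1, "C", "D", "D"))
n2 = child_node(n1, "Right", (2, "C", "D", "D"))
print(solution(n2), n2.path_cost)  # ['Suck', 'Right'] 2.0
```

Storing only a parent pointer, rather than the whole path, keeps each node small; the full action sequence is rebuilt once, when a goal node is found.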

3. Midterm Exam Revision

Topics to Review:

- Intelligent agents and Environment types.
- Rationality and PEAS (Performance measure, Environment, Actuators, Sensors).
- Agent types (Simple reflex, model-based, goal-based, utility-based).
- Internal representations (Atomic, Factored, Structured).
- Graphs vs. Trees and problem solving using search strategies.

Exam Format:

The exam will consist of True/False questions, Multiple Choice Questions (MCQs), and an applied case study. For the case study, you will be expected to analyze a scenario using the PEAS framework and choose/justify the most appropriate agent type for that specific problem.