1. Mechanics of Spatial Filtering

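While point processing (intensity transformation) operates on a single pixel at a time, spatial filtering computes each output pixel from a neighborhood of pixels around that location.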

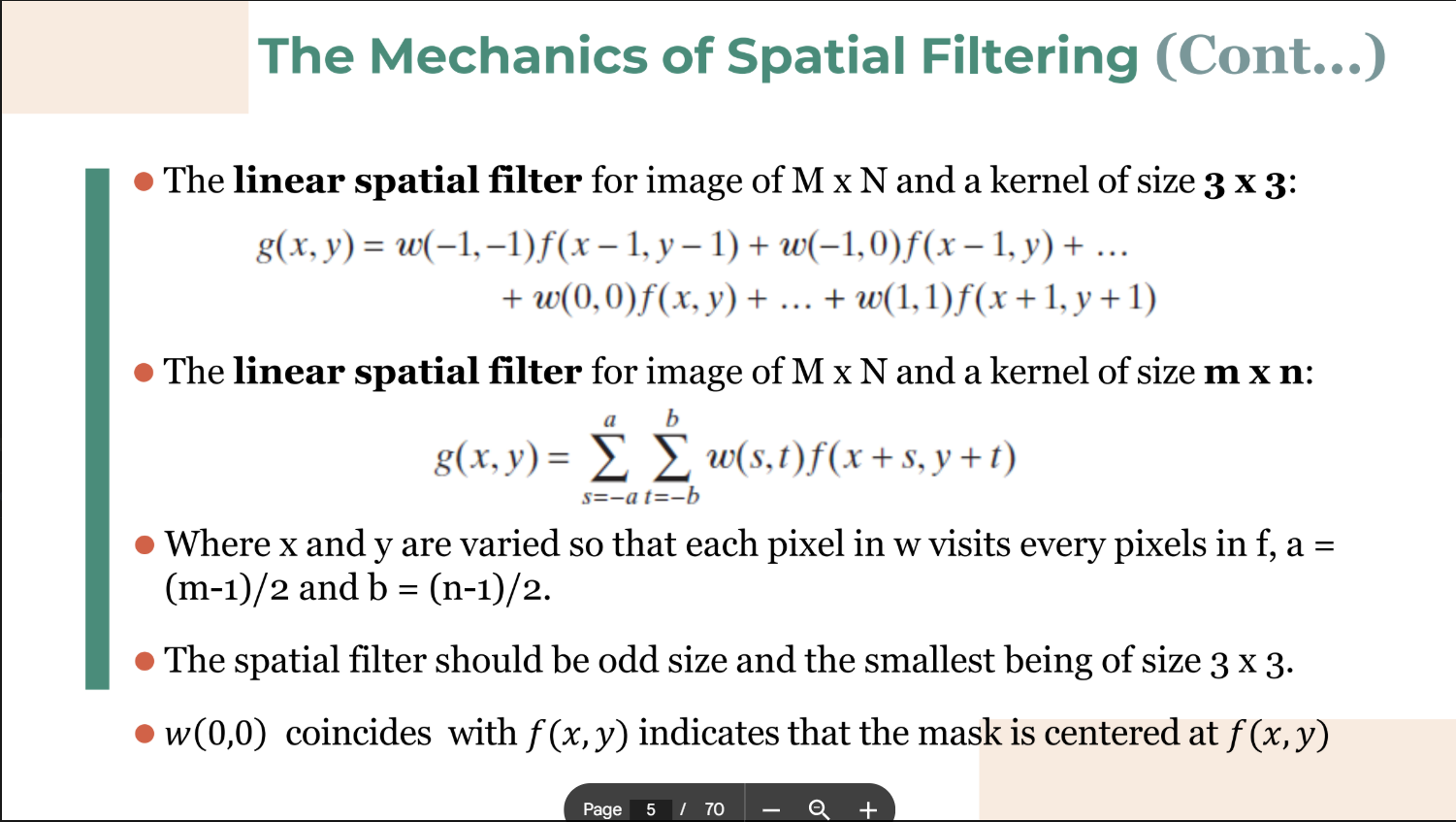

- The Process: The spatial filter mask is moved from point to point across the image. At each coordinate $(x, y)$, a predefined operation is performed on the image pixels within the neighborhood, generating a new pixel value for the center coordinate.

- Categories:
  - Linear Spatial Filtering: Involves multiplying pixels by the corresponding mask coefficients and summing the results (convolution/correlation).
  - Nonlinear Spatial Filtering: Operates on the ranking or ordering of the pixel values within the neighborhood.

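The linear case can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference implementation: the function name `correlate2d_valid` and the sample arrays are assumptions made for the example, and the mask is applied only at positions where it fits entirely inside the image.

```python
import numpy as np

def correlate2d_valid(image, mask):
    """Slide `mask` over `image` and sum the element-wise products (correlation).

    Only positions where the mask fits entirely inside the image are computed.
    """
    image = np.asarray(image, dtype=float)
    mask = np.asarray(mask, dtype=float)
    m, n = mask.shape
    out_h = image.shape[0] - m + 1
    out_w = image.shape[1] - n + 1
    out = np.empty((out_h, out_w))
    for y in range(out_h):
        for x in range(out_w):
            # Neighborhood currently covered by the mask.
            window = image[y:y + m, x:x + n]
            out[y, x] = np.sum(window * mask)
    return out

# Hypothetical 4x4 image and a 3x3 averaging mask.
image = np.arange(16, dtype=float).reshape(4, 4)
mask = np.ones((3, 3)) / 9.0
result = correlate2d_valid(image, mask)
```

Convolution differs from correlation only in that the mask is rotated by 180 degrees first, so `correlate2d_valid(image, np.flip(mask))` would give the convolution. For symmetric masks such as the averaging mask here, the two results are identical.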

Convolution Output Size Formula

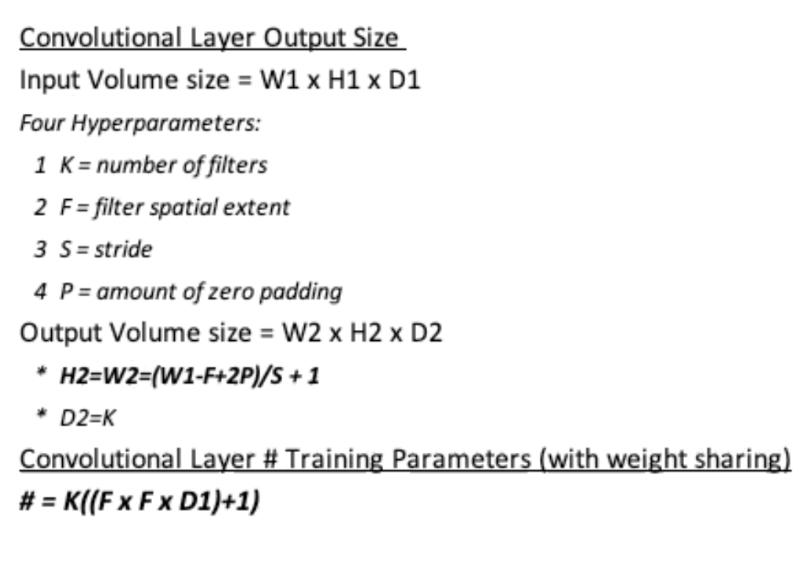

When applying a filter, the spatial dimensions of the output image can be calculated using the following formula:

$$O = \left\lfloor \frac{I - K + 2P}{S} \right\rfloor + 1$$

- $O$: Output dimension (width or height)
- $I$: Input dimension
- $K$: Filter (kernel) size
- $P$: Padding applied to each side of the input
- $S$: Stride (step size of the filter)
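As a quick check of the output-size calculation, the sketch below evaluates it for a few cases. The function name `conv_output_size` and its parameter names are assumptions made for this example, and integer (floor) division is assumed for strides that do not divide evenly.

```python
def conv_output_size(input_size, kernel_size, padding=0, stride=1):
    """Output dimension for one spatial axis: floor((I - K + 2P) / S) + 1."""
    if stride < 1:
        raise ValueError("stride must be at least 1")
    return (input_size - kernel_size + 2 * padding) // stride + 1

# A 3x3 filter on a 5-pixel axis, no padding, stride 1: the output shrinks.
no_padding = conv_output_size(5, 3)                      # 3
# One pixel of padding per side keeps the size unchanged.
same_size = conv_output_size(5, 3, padding=1)            # 5
# A stride of 2 roughly halves the output.
strided = conv_output_size(7, 3, padding=1, stride=2)    # 4
```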

What Happens at the Borders?

-

The mask falls outside the edge!

-

Solutions?

- Ignore the edges: The resultant image is smaller than the original

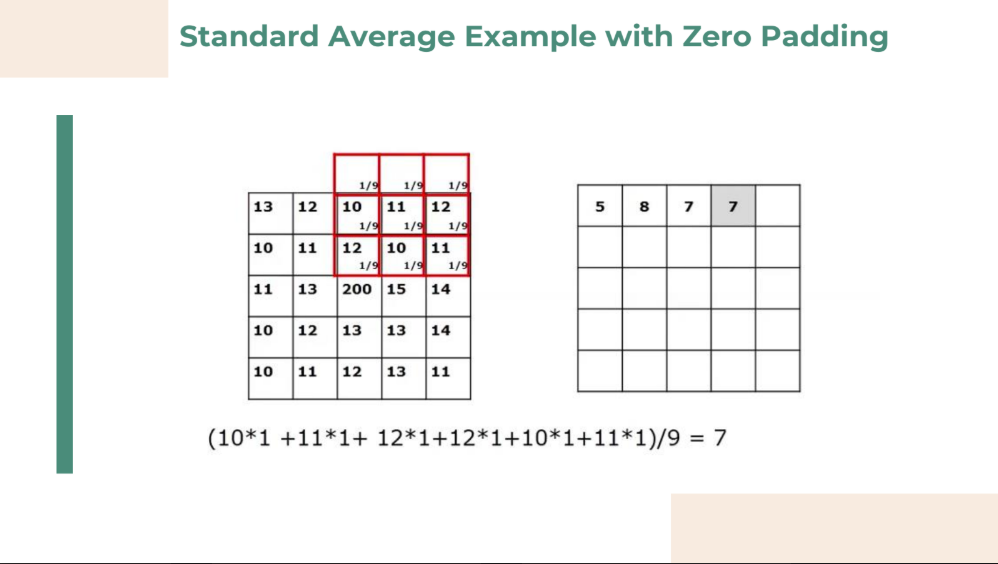

- Pad with zeros: Introducing unwanted artifacts

- Repeat rows and columns: Pad with adjacent rows and columns
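The border strategies above can be compared on a tiny array. This sketch uses NumPy's `np.pad`; the sample image and the one-pixel pad width (the amount a $3 \times 3$ mask needs) are assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical 3x3 image; a 3x3 mask needs one extra pixel on every side.
image = np.array([[1, 2, 3],
                  [4, 5, 6],
                  [7, 8, 9]])
pad = 1

# Pad with zeros: the image is surrounded by artificial dark pixels.
zero_padded = np.pad(image, pad, mode="constant", constant_values=0)

# Repeat rows and columns: the border pixels are copied outward.
replicated = np.pad(image, pad, mode="edge")

# Ignore the edges: a 3x3 mask fits at only one position in a 3x3 image,
# so the output shrinks by 2 * pad along each axis.
valid_shape = (image.shape[0] - 2 * pad, image.shape[1] - 2 * pad)
```

Here the first row of `zero_padded` is all zeros, while the first row of `replicated` is `[1, 1, 2, 3, 3]`, which is usually a gentler assumption about what lies beyond the border.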

2. Smoothing Spatial Filters

Smoothing filters are used for blurring (a preprocessing step to remove small details or bridge gaps in lines) and for noise reduction.

A. Linear Smoothing Filters (Averaging)

These filters replace the value of every pixel with the average of the intensity levels in its neighborhood.

- Effect: Reduces "sharp" transitions in intensities. Because random noise typically consists of sharp transitions, averaging effectively reduces noise. However, edges are also sharp transitions, so this causes undesirable edge blurring.
- Characteristics:
  - The elements of the mask must be positive.
  - The sum of the mask elements is 1.

- Types of Masks:
  - Box Filter: All coefficients in the mask are equal (typically $1$). The sum is divided by the total number of pixels in the mask (e.g., $9$ for a $3 \times 3$ mask).
  - Weighted Average Filter: Pixels closer to the center of the mask are given higher weight (importance) than those further away, which helps reduce blurring compared to a standard box filter.
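To make the difference between the two mask types concrete, the sketch below applies both to a step edge. The helper name `smooth`, the replicated-border handling, the test image, and the particular weighted mask (a commonly used $\frac{1}{16}$ example) are assumptions for illustration.

```python
import numpy as np

def smooth(image, mask):
    """Apply a smoothing mask, replicating border pixels so the size is kept."""
    image = np.asarray(image, dtype=float)
    m, n = mask.shape
    padded = np.pad(image, ((m // 2, m // 2), (n // 2, n // 2)), mode="edge")
    out = np.empty_like(image)
    for y in range(image.shape[0]):
        for x in range(image.shape[1]):
            out[y, x] = np.sum(padded[y:y + m, x:x + n] * mask)
    return out

# Box filter: equal coefficients, normalized so they sum to 1.
box = np.ones((3, 3)) / 9.0

# Weighted average: the center pixel counts most, the corners least.
weighted = np.array([[1, 2, 1],
                     [2, 4, 2],
                     [1, 2, 1]]) / 16.0

# Hypothetical image with a vertical step edge (0 on the left, 90 on the right).
image = np.zeros((5, 6))
image[:, 3:] = 90

box_result = smooth(image, box)
weighted_result = smooth(image, weighted)
```

Across the edge, the box filter turns the row `0, 0, 0, 90, 90, 90` into `0, 0, 30, 60, 90, 90`, while the weighted mask gives `0, 0, 22.5, 67.5, 90, 90`. The weighted values stay closer to the original intensities, which is the reduced blurring described above.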

B. Order-Statistic Filters (Nonlinear)

These filters replace the center pixel based on the ordering (ranking) of the pixels in the image area encompassed by the filter.

- Median Filter: Replaces the value of a pixel with the median of the intensity levels in the neighborhood.
  - Application: Provides excellent noise reduction for impulse noise (salt-and-pepper noise) with considerably less blurring than linear smoothing filters.
- Max Filter: Replaces the pixel with the maximum value in the neighborhood. Useful for finding the brightest points (removes "pepper" noise).
- Min Filter: Replaces the pixel with the minimum value in the neighborhood. Useful for finding the darkest points (removes "salt" noise).
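The three order-statistic filters differ only in which ranked value they pick, so one helper can cover all of them. This is a minimal sketch: the function name `order_statistic_filter`, the replicated borders, and the test image are assumptions made for the example.

```python
import numpy as np

def order_statistic_filter(image, statistic, size=3):
    """Replace each pixel with `statistic` of its size x size neighborhood.

    `statistic` is a function such as np.median, np.max, or np.min.
    Border pixels are replicated so the output keeps the input size.
    """
    image = np.asarray(image, dtype=float)
    r = size // 2
    padded = np.pad(image, r, mode="edge")
    out = np.empty_like(image)
    for y in range(image.shape[0]):
        for x in range(image.shape[1]):
            out[y, x] = statistic(padded[y:y + size, x:x + size])
    return out

# Hypothetical flat gray image with one "salt" pixel and one "pepper" pixel.
image = np.full((5, 7), 100.0)
image[2, 1] = 255   # salt
image[2, 5] = 0     # pepper

median_result = order_statistic_filter(image, np.median)
max_result = order_statistic_filter(image, np.max)
min_result = order_statistic_filter(image, np.min)
```

In this example the median filter removes both outliers and leaves the flat background untouched. The max filter removes the pepper pixel but spreads the salt pixel over its neighborhood, and the min filter does the opposite.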

3. Sharpening Spatial Filters

The objective of sharpening is to highlight transitions in intensity (edges, boundaries). Because smoothing is achieved by spatial averaging, which is analogous to integration, sharpening can be achieved by the inverse operation, spatial differentiation.

- Characteristics:
  - The elements of the mask contain both positive and negative weights.
  - The sum of the mask weights is 0.

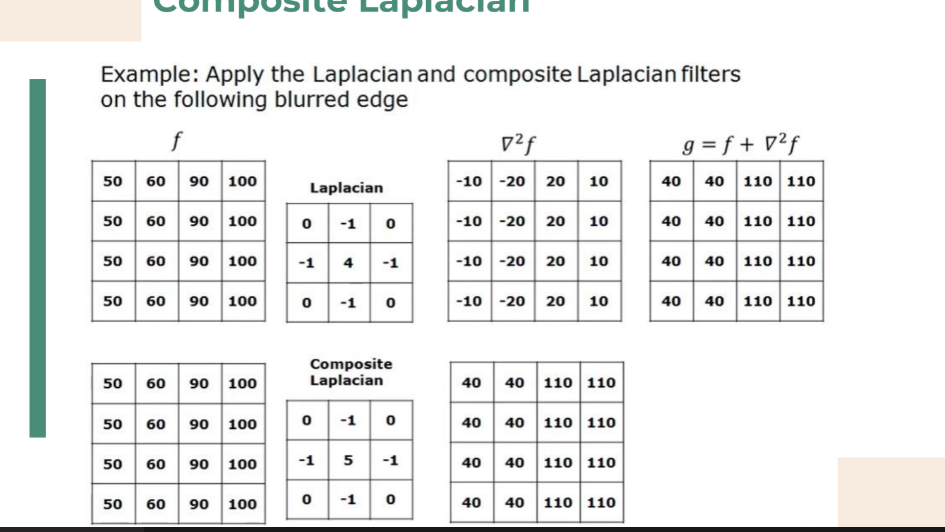

A. The Laplacian (Second Derivative)

The Laplacian is an isotropic (rotation-invariant) operator that calculates the second derivative of the image to find rapid changes in intensity.

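Mathematical Definition:

$$\nabla^2 f = \frac{\partial^2 f}{\partial x^2} + \frac{\partial^2 f}{\partial y^2}$$

Discrete Approximation Masks:

$$\nabla^2 f(x, y) = f(x+1, y) + f(x-1, y) + f(x, y+1) + f(x, y-1) - 4f(x, y)$$

Common $3 \times 3$ masks:

$$\begin{bmatrix} 0 & 1 & 0 \\ 1 & -4 & 1 \\ 0 & 1 & 0 \end{bmatrix} \qquad \begin{bmatrix} 1 & 1 & 1 \\ 1 & -8 & 1 \\ 1 & 1 & 1 \end{bmatrix}$$

The second mask also includes the diagonal neighbors. Both masks are also used with all signs reversed, giving a positive center coefficient.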

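Image Enhancement with the Laplacian:

Because the Laplacian highlights edges but zeroes out flat areas (losing the background), the Laplacian image is usually combined with the original image to restore the background information:

$$g(x, y) = f(x, y) + c\,\nabla^2 f(x, y)$$

(Where $f$ is the input image, $g$ is the sharpened image, $\nabla^2 f$ is the Laplacian of $f$, and $c = -1$ if the center coefficient of the Laplacian mask is negative or $c = +1$ if it is positive.)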

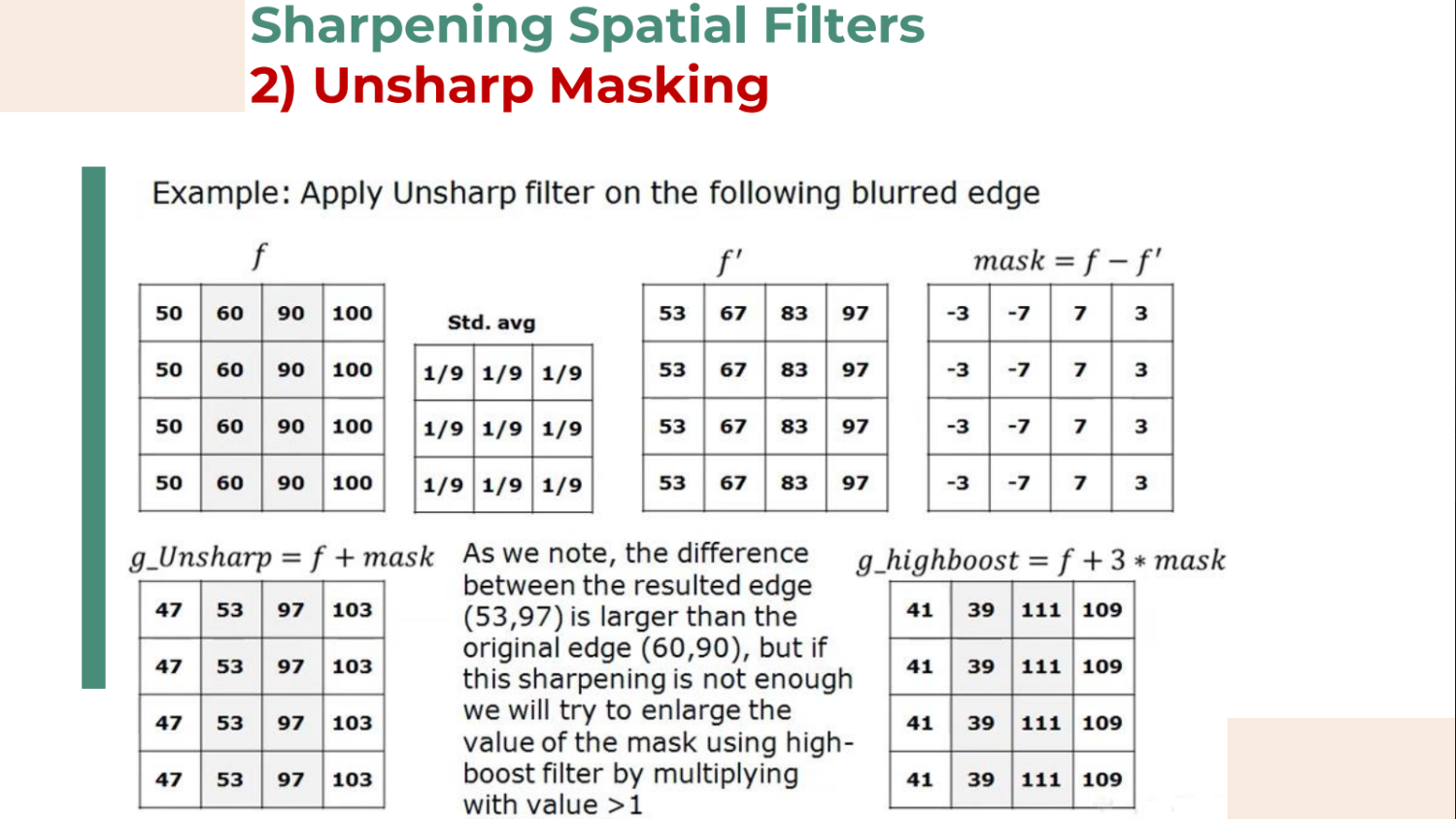
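Laplacian sharpening can be sketched as follows. This is an illustrative example under stated assumptions: it uses the 4-neighbor mask with a center coefficient of $-4$ (so the Laplacian is subtracted from the image), replicated borders, and hypothetical names `filter3x3` and `laplacian_sharpen`.

```python
import numpy as np

# 4-neighbor Laplacian mask with a negative center coefficient.
LAPLACIAN = np.array([[0,  1, 0],
                      [1, -4, 1],
                      [0,  1, 0]], dtype=float)

def filter3x3(image, mask):
    """Apply a 3x3 mask, replicating border pixels so the size is kept."""
    image = np.asarray(image, dtype=float)
    padded = np.pad(image, 1, mode="edge")
    out = np.empty_like(image)
    for y in range(image.shape[0]):
        for x in range(image.shape[1]):
            out[y, x] = np.sum(padded[y:y + 3, x:x + 3] * mask)
    return out

def laplacian_sharpen(image):
    """Sharpen with g = f + c * Laplacian(f), where c = -1 for this mask."""
    image = np.asarray(image, dtype=float)
    c = -1.0  # the center coefficient of LAPLACIAN is negative
    return image + c * filter3x3(image, LAPLACIAN)

# Hypothetical image with a vertical step edge (50 on the left, 100 on the right).
image = np.full((4, 6), 50.0)
image[:, 3:] = 100.0

laplacian = filter3x3(image, LAPLACIAN)
sharpened = laplacian_sharpen(image)
```

Each row of `laplacian` is `0, 0, 50, -50, 0, 0`: zero in the flat regions and nonzero only at the edge. Each row of `sharpened` is `50, 50, 0, 150, 100, 100`, so the background is preserved and the edge is exaggerated. For 8-bit images the result would normally be clipped to the range 0 to 255 afterwards.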

B. Unsharp Masking & Highboost Filtering

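This is a classic technique used in the publishing industry, consisting of three steps:

- Blur the original image $f(x, y)$ to obtain $\bar{f}(x, y)$.
- Subtract the blurred image from the original to create a mask: $g_{mask}(x, y) = f(x, y) - \bar{f}(x, y)$
- Add a weighted portion of the mask back to the original image: $g(x, y) = f(x, y) + k\,g_{mask}(x, y)$

The weight $k \geq 0$ determines the type of filtering:

- $k = 1$: Unsharp masking.
- $k > 1$: Highboost filtering (increases the contribution of the edges).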
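The three steps map directly onto code. In this sketch the blur is a $3 \times 3$ box filter with replicated borders, which is an assumption (any smoothing filter could be used), and the names `box_blur` and `highboost` and the weight parameter `k` are chosen for the example.

```python
import numpy as np

def box_blur(image, size=3):
    """Blur with a size x size box filter, replicating border pixels."""
    image = np.asarray(image, dtype=float)
    r = size // 2
    padded = np.pad(image, r, mode="edge")
    out = np.empty_like(image)
    for y in range(image.shape[0]):
        for x in range(image.shape[1]):
            out[y, x] = padded[y:y + size, x:x + size].mean()
    return out

def highboost(image, k=1.0):
    """Return f + k * (f - blurred f); k = 1 is plain unsharp masking."""
    image = np.asarray(image, dtype=float)
    blurred = box_blur(image)   # step 1: blur the original
    mask = image - blurred      # step 2: subtract to form the mask
    return image + k * mask     # step 3: add the weighted mask back

# Hypothetical image with a vertical step edge (60 on the left, 150 on the right).
image = np.full((4, 6), 60.0)
image[:, 3:] = 150.0

unsharp = highboost(image, k=1.0)
boosted = highboost(image, k=2.0)
```

With a weight of 1, each row becomes `60, 60, 30, 180, 150, 150`. With a weight of 2 it becomes `60, 60, 0, 210, 150, 150`, showing how a larger weight increases the contribution of the edge.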

C. The Gradient (First Derivative)

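First derivatives are primarily used to extract edges (rather than just enhance the whole image). The gradient of an image $f$ at coordinates $(x, y)$ is the vector of its partial derivatives:

$$\nabla f = \begin{bmatrix} g_x \\ g_y \end{bmatrix} = \begin{bmatrix} \partial f / \partial x \\ \partial f / \partial y \end{bmatrix}$$

Gradient Magnitude:

To calculate the strength of the edge, we find the magnitude. In image processing, this is often approximated using absolute values for computational speed:

$$M(x, y) = \sqrt{g_x^2 + g_y^2} \approx |g_x| + |g_y|$$

Common Gradient Operators:

- Roberts Cross-Gradient: Uses $2 \times 2$ masks to compute diagonal differences.
- Sobel Operators: Use $3 \times 3$ masks. They compute $g_x$ (horizontal changes) and $g_y$ (vertical changes). Using a $3 \times 3$ neighborhood provides a slight smoothing effect, making the Sobel operators less sensitive to noise than the Roberts operators.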
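A Sobel-based edge detector using the absolute-value approximation of the magnitude might look like the sketch below. The names `filter3x3` and `gradient_magnitude`, the replicated borders, and the test image are assumptions for illustration, and `SOBEL_X` is taken here to be the mask that responds to horizontal intensity changes.

```python
import numpy as np

# Sobel masks: SOBEL_X responds to horizontal intensity changes,
# SOBEL_Y (its transpose) to vertical intensity changes.
SOBEL_X = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)
SOBEL_Y = SOBEL_X.T

def filter3x3(image, mask):
    """Apply a 3x3 mask, replicating border pixels so the size is kept."""
    image = np.asarray(image, dtype=float)
    padded = np.pad(image, 1, mode="edge")
    out = np.empty_like(image)
    for y in range(image.shape[0]):
        for x in range(image.shape[1]):
            out[y, x] = np.sum(padded[y:y + 3, x:x + 3] * mask)
    return out

def gradient_magnitude(image):
    """Approximate the gradient magnitude as |gx| + |gy|."""
    gx = filter3x3(image, SOBEL_X)
    gy = filter3x3(image, SOBEL_Y)
    return np.abs(gx) + np.abs(gy)

# Hypothetical image with a vertical step edge (0 on the left, 10 on the right).
image = np.zeros((5, 6))
image[:, 3:] = 10.0

magnitude = gradient_magnitude(image)
```

Every row of `magnitude` is `0, 0, 40, 40, 0, 0`: the response is zero in the flat regions and strong on both sides of the edge, which is why the gradient is suited to edge extraction.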

For the full comparison and summary of Ch3, see here.