HMM Numerical Example

The Scenario Setup

Imagine a robot stationed inside a building without any windows, so it cannot see the weather directly. Its goal is to determine the hidden state of the world: Is it Raining ($R_t$) or not ($\neg R_t$)?

However, the robot can observe "evidence." In this case, the robot can see whether people walking into the building are carrying Umbrellas ($U_t$).

To solve this, the HMM uses two distinct probability models:

- The Transition Model ($P(R_t \mid R_{t-1})$): How weather behaves over time.
  - If it rained yesterday, there is a 70% chance it rains today: $P(R_t \mid R_{t-1}) = 0.7$.
  - If it did not rain yesterday, there is a 30% chance it starts raining today: $P(R_t \mid \neg R_{t-1}) = 0.3$.
- The Sensor/Observation Model ($P(U_t \mid R_t)$): How the weather influences people's behavior.
  - If it is raining, there is a 90% chance people carry umbrellas: $P(U_t \mid R_t) = 0.9$.
  - If it is not raining, there is still a 20% chance people carry umbrellas (perhaps to block the sun): $P(U_t \mid \neg R_t) = 0.2$.

The Initial Belief: The robot starts with a prior belief that there was a 50% chance of rain yesterday, meaning $P(R_{t-1}) = 0.5$ and $P(\neg R_{t-1}) = 0.5$.

Here is how the robot calculates the updated probability that it is currently raining, denoted mathematically as $P(R_t \mid U_t)$.

Step 1: Prediction (The Time Update)

Before the robot even looks at the umbrellas, it must forecast today's weather based purely on yesterday's belief and how weather naturally transitions. It does this by using the Law of Total Probability to sum up all possible ways it could rain today:

$$P(R_t) = \sum_{r_{t-1}} P(R_t \mid r_{t-1}) \, P(r_{t-1})$$

Expanded, this means the probability of rain today is the sum of two scenarios: (1) it rained yesterday and continued, or (2) it didn't rain yesterday but started today.

$$P(R_t) = P(R_t \mid R_{t-1}) \, P(R_{t-1}) + P(R_t \mid \neg R_{t-1}) \, P(\neg R_{t-1})$$

Plugging in the numbers from the models:

$$P(R_t) = (0.7)(0.5) + (0.3)(0.5) = 0.35 + 0.15 = 0.5$$

So, based purely on time passing, the robot believes there is a 50% chance of rain today. This also means there is a 50% chance of no rain today ($P(\neg R_t) = 1 - 0.5 = 0.5$).
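The prediction step fits in a few lines of Python. This is a minimal sketch, not the author's code. The function name `predict_rain` and the constant names are hypothetical, and the probabilities are the transition-model values given above.

```python
# Transition model from the scenario (constant names are hypothetical).
P_RAIN_GIVEN_RAIN = 0.7      # P(R_t | R_{t-1})
P_RAIN_GIVEN_NO_RAIN = 0.3   # P(R_t | not R_{t-1})


def predict_rain(prior_rain: float) -> float:
    """Time update: sum over both possible values of yesterday's weather."""
    rained_and_continued = P_RAIN_GIVEN_RAIN * prior_rain
    started_today = P_RAIN_GIVEN_NO_RAIN * (1.0 - prior_rain)
    return rained_and_continued + started_today


predicted = predict_rain(0.5)
print(f"P(R_t) = {predicted:.2f}, P(not R_t) = {1 - predicted:.2f}")
# P(R_t) = 0.50, P(not R_t) = 0.50
```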

Step 2: The Update (Using the Observation)

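Now, the robot looks at the door and observes an umbrella ($U_t = \text{true}$). It updates its prediction with Bayes' rule:

$$P(R_t \mid U_t) = \alpha \, P(U_t \mid R_t) \, P(R_t)$$

The formula introduces a normalization constant, $\alpha$, which rescales the results so that the probabilities of all possible states sum to 1.

The robot calculates the unnormalized posterior values (sensor probability times predicted probability) for both possible realities (Rain vs. No Rain):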

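Reality 1: It is raining.

$$\alpha \, P(U_t \mid R_t) \, P(R_t) = \alpha \, (0.9)(0.5) = 0.45\alpha$$

Reality 2: It is NOT raining.

$$\alpha \, P(U_t \mid \neg R_t) \, P(\neg R_t) = \alpha \, (0.2)(0.5) = 0.10\alpha$$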
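As a minimal sketch of the update step, the function below computes the coefficients of $\alpha$ for both realities. The names are hypothetical, and the sensor probabilities are the ones from the model above.

```python
from __future__ import annotations

# Sensor model from the scenario (constant names are hypothetical).
P_UMBRELLA_GIVEN_RAIN = 0.9     # P(U_t | R_t)
P_UMBRELLA_GIVEN_NO_RAIN = 0.2  # P(U_t | not R_t)


def unnormalized_update(predicted_rain: float) -> tuple[float, float]:
    """Weight each predicted reality by the chance of seeing an umbrella in it.

    Returns the coefficients of alpha for (rain, no rain); they do not sum to 1 yet.
    """
    rain = P_UMBRELLA_GIVEN_RAIN * predicted_rain
    no_rain = P_UMBRELLA_GIVEN_NO_RAIN * (1.0 - predicted_rain)
    return rain, no_rain


rain, no_rain = unnormalized_update(0.5)
print(f"rain: {rain:.2f}*alpha, no rain: {no_rain:.2f}*alpha")
# rain: 0.45*alpha, no rain: 0.10*alpha
```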

Step 3: Normalization

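In probability, the total chance of all possible states must equal exactly 1 (or 100%). Currently, our unnormalized values ($0.45\alpha$ and $0.10\alpha$) sum to $0.55\alpha$, so $\alpha$ must be chosen to make that sum equal 1.

We find $\alpha$:

$$0.55\alpha = 1 \implies \alpha = \frac{1}{0.55} \approx 1.818$$

Finally, we apply this normalization factor to our "Rain" calculation to get the true percentage:

$$P(R_t \mid U_t) = 0.45 \times \frac{1}{0.55} = \frac{0.45}{0.55} \approx 0.818$$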
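The three steps can be combined into one filtering cycle. This is a sketch under stated assumptions, not the author's implementation. The names `normalize` and `filter_step` are hypothetical. The document only works through the umbrella case. The `umbrella_seen=False` branch uses the complements $1 - 0.9 = 0.1$ and $1 - 0.2 = 0.8$ from the sensor model.

```python
from __future__ import annotations


def normalize(values: list[float]) -> tuple[float, list[float]]:
    """Return alpha = 1 / sum(values) and the values rescaled to sum to 1."""
    alpha = 1.0 / sum(values)
    return alpha, [alpha * v for v in values]


def filter_step(prior_rain: float, umbrella_seen: bool) -> tuple[float, float]:
    """One predict-update-normalize cycle of the umbrella HMM.

    Returns (alpha, P(R_t | observation)).
    """
    # Step 1: prediction with the transition model.
    predicted = 0.7 * prior_rain + 0.3 * (1.0 - prior_rain)
    # Step 2: sensor model; "no umbrella" uses the complements 0.1 and 0.8.
    p_obs_rain, p_obs_no_rain = (0.9, 0.2) if umbrella_seen else (0.1, 0.8)
    unnormalized = [p_obs_rain * predicted, p_obs_no_rain * (1.0 - predicted)]
    # Step 3: normalization.
    alpha, (p_rain, _p_no_rain) = normalize(unnormalized)
    return alpha, p_rain


alpha, p_rain = filter_step(prior_rain=0.5, umbrella_seen=True)
print(f"alpha = {alpha:.3f}, P(R_t | U_t) = {p_rain:.3f}")
# alpha = 1.818, P(R_t | U_t) = 0.818
```

To filter a sequence of days, call `filter_step` once per day and pass each returned probability back in as the next `prior_rain`.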

The Conclusion: Before seeing the umbrella, the robot thought there was a 50% chance of rain. After seeing the umbrella, its confidence skyrocketed to approximately 81.8%. This happens because the sensor model makes umbrellas a strong indicator of rain: they are 4.5 times as likely on a rainy day (90%) as on a dry one (20%).

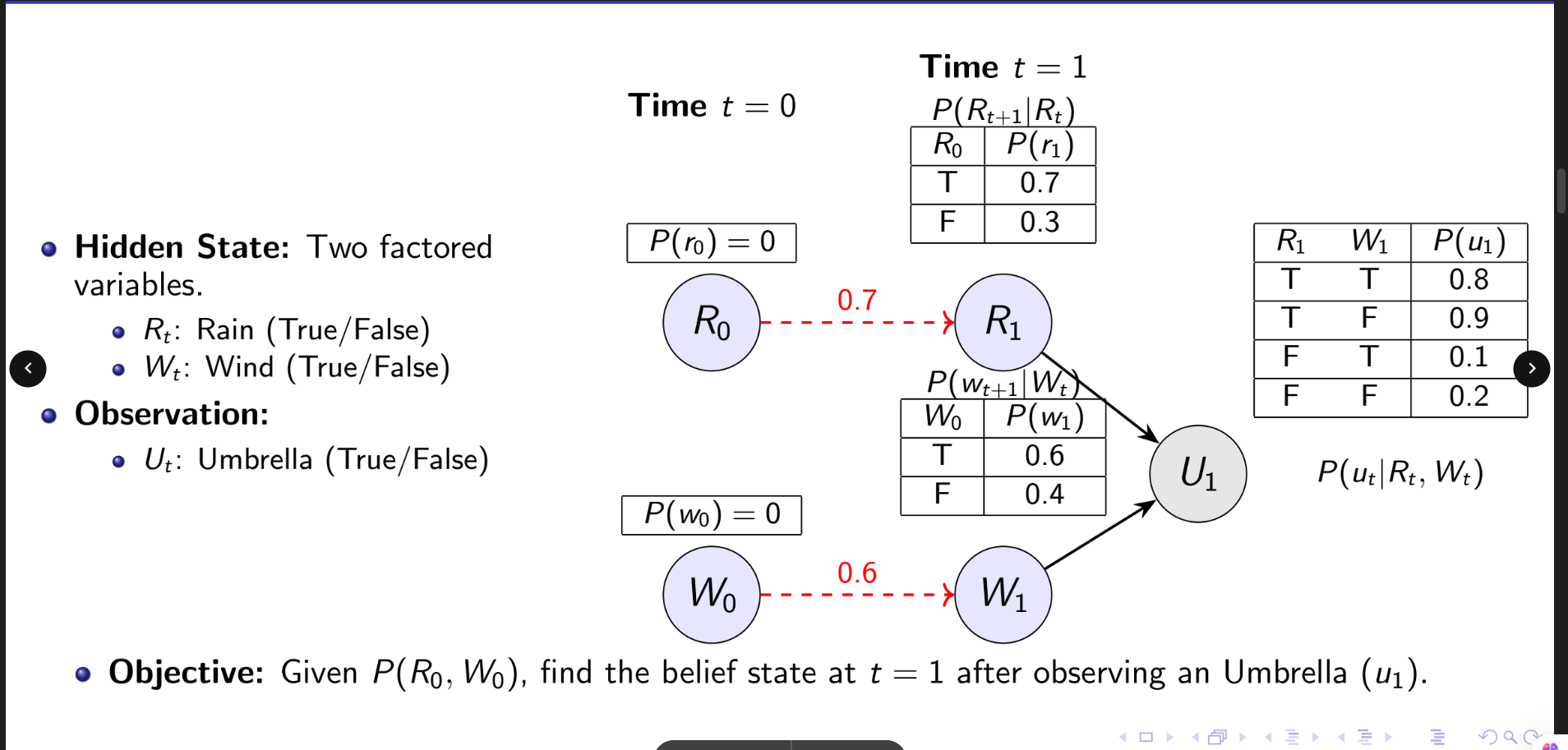

Dynamic BN Example

The Scenario Setup

The DBN example builds on the HMM scenario but introduces a factored representation. Instead of a single hidden variable, the true state of the weather is split into two independent variables: Rain ($R_t$) and Wind ($W_t$).

The robot still observes Umbrellas ($U_t$), but the chance of seeing one now depends on both hidden variables.

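The Initial Belief ($P(R_{t-1}, W_{t-1})$)

The robot starts with absolute certainty that it was neither raining nor windy yesterday.

- $P(\neg R_{t-1}) = 1.0$, so $P(R_{t-1}) = 0$.
- $P(\neg W_{t-1}) = 1.0$, so $P(W_{t-1}) = 0$.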

The Transition Models: Rain and wind evolve over time completely independently of one another.

- Rain: If it did not rain yesterday, there is a 30% chance it starts today ($P(R_t \mid \neg R_{t-1}) = 0.3$).
- Wind: If it was not windy yesterday, there is a 40% chance it gets windy today ($P(W_t \mid \neg W_{t-1}) = 0.4$).

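The Observation Model ($P(U_t \mid R_t, W_t)$): The chance of seeing umbrellas depends on both rain and wind.

- Rain + Wind: $P(U_t \mid R_t, W_t)$ (wind might break umbrellas, slightly lowering usage).
- Rain + No Wind: $P(U_t \mid R_t, \neg W_t)$.
- No Rain + Wind: $P(U_t \mid \neg R_t, W_t)$.
- No Rain + No Wind: $P(U_t \mid \neg R_t, \neg W_t)$.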

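Step 1: Prediction (The Time Update)

Because the robot knew with 100% certainty that yesterday was clear and calm ($\neg R_{t-1}, \neg W_{t-1}$), the Law of Total Probability collapses to a single term for each variable. Only the transitions out of "no rain" and "no wind" contribute.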

First, calculate the individual probabilities for today:

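- $P(R_t) = P(R_t \mid \neg R_{t-1}) \cdot 1.0 = 0.3$, so $P(\neg R_t) = 0.7$.
- $P(W_t) = P(W_t \mid \neg W_{t-1}) \cdot 1.0 = 0.4$, so $P(\neg W_t) = 0.6$.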

Next, because the transitions are independent, you multiply them to find the "Joint Prior" representing the four possible realities for today's weather:

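- Rain & Wind ($R_t, W_t$): $0.3 \times 0.4 = 0.12$.
- Rain & No Wind ($R_t, \neg W_t$): $0.3 \times 0.6 = 0.18$.
- No Rain & Wind ($\neg R_t, W_t$): $0.7 \times 0.4 = 0.28$.
- No Rain & No Wind ($\neg R_t, \neg W_t$): $0.7 \times 0.6 = 0.42$.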
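The factored prediction can be sketched as follows. The function and constant names are hypothetical. The code uses only the two transition probabilities given above and relies on the independence of rain and wind to multiply the marginals.

```python
from __future__ import annotations

from itertools import product

# Transition probabilities out of yesterday's known state (no rain, no wind).
P_RAIN_GIVEN_NO_RAIN = 0.3  # P(R_t | not R_{t-1})
P_WIND_GIVEN_NO_WIND = 0.4  # P(W_t | not W_{t-1})


def joint_prior() -> dict[tuple[bool, bool], float]:
    """P(R_t, W_t) for the four realities, keyed by (rain, wind).

    Yesterday was certainly clear and calm, so each marginal is a single
    transition term; because rain and wind evolve independently, the joint
    probability is the product of the marginals.
    """
    prior = {}
    for rain, wind in product((True, False), repeat=2):
        p_rain = P_RAIN_GIVEN_NO_RAIN if rain else 1.0 - P_RAIN_GIVEN_NO_RAIN
        p_wind = P_WIND_GIVEN_NO_WIND if wind else 1.0 - P_WIND_GIVEN_NO_WIND
        prior[(rain, wind)] = p_rain * p_wind
    return prior


for (rain, wind), p in joint_prior().items():
    print(f"rain={rain!s:<5} wind={wind!s:<5} P = {p:.2f}")
```

The loop prints 0.12, 0.18, 0.28 and 0.42, which sum to 1.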

Step 2: The Update (Using the Observation)

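The robot now observes an Umbrella ($U_t = \text{true}$). For each reality, it multiplies the joint prior by the observation model's probability of seeing an umbrella in that reality.

This creates the unnormalized posterior, $P(U_t \mid R_t, W_t) \, P(R_t, W_t)$, for each of the four realities: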

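- Reality 1 ($R_t, W_t$): $P(U_t \mid R_t, W_t) \times 0.12$.
- Reality 2 ($R_t, \neg W_t$): $P(U_t \mid R_t, \neg W_t) \times 0.18$.
- Reality 3 ($\neg R_t, W_t$): $P(U_t \mid \neg R_t, W_t) \times 0.28$.
- Reality 4 ($\neg R_t, \neg W_t$): $P(U_t \mid \neg R_t, \neg W_t) \times 0.42$.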
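The update step can be sketched with the sensor table passed in as data. The numeric observation-model values are not reproduced in this section, so `HYPOTHETICAL_SENSOR` holds placeholder numbers chosen only so the code runs. The two no-wind entries echo the HMM example, and the windy entries are invented. Substitute the real values. All names here are hypothetical.

```python
from __future__ import annotations

# Joint prior P(R_t, W_t) from Step 1, keyed by (rain, wind).
JOINT_PRIOR = {
    (True, True): 0.12,
    (True, False): 0.18,
    (False, True): 0.28,
    (False, False): 0.42,
}

# HYPOTHETICAL placeholder values for P(U_t | R_t, W_t), for illustration only.
# Replace them with the actual observation-model numbers.
HYPOTHETICAL_SENSOR = {
    (True, True): 0.8,    # rain + wind (wind may break umbrellas)
    (True, False): 0.9,   # rain + no wind
    (False, True): 0.1,   # no rain + wind
    (False, False): 0.2,  # no rain + no wind
}


def unnormalized_posterior(
    prior: dict[tuple[bool, bool], float],
    sensor: dict[tuple[bool, bool], float],
) -> dict[tuple[bool, bool], float]:
    """Multiply each reality's prior by the chance of seeing an umbrella in it."""
    return {state: sensor[state] * p for state, p in prior.items()}


for state, value in unnormalized_posterior(JOINT_PRIOR, HYPOTHETICAL_SENSOR).items():
    print(state, round(value, 4))
```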

Step 3: Normalization & Marginalization

To convert these unnormalized values into true probabilities, they must be scaled so they sum to 1.

First, sum the unnormalized values:

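$$S = P(U_t \mid R_t, W_t)(0.12) + P(U_t \mid R_t, \neg W_t)(0.18) + P(U_t \mid \neg R_t, W_t)(0.28) + P(U_t \mid \neg R_t, \neg W_t)(0.42)$$

Next, divide each unnormalized value by the sum (equivalently, multiply by $\alpha = 1/S$):

- $P(R_t, W_t \mid U_t) = P(U_t \mid R_t, W_t)(0.12) / S$.
- $P(R_t, \neg W_t \mid U_t) = P(U_t \mid R_t, \neg W_t)(0.18) / S$.
- $P(\neg R_t, W_t \mid U_t) = P(U_t \mid \neg R_t, W_t)(0.28) / S$.
- $P(\neg R_t, \neg W_t \mid U_t) = P(U_t \mid \neg R_t, \neg W_t)(0.42) / S$.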

Finally, the robot wants to answer its core question: Is it raining? To find the overall probability of rain, it performs marginalization. It sums the probabilities of the two realities where it is raining, which sums the wind variable out of the final answer:

$$P(R_t \mid U_t) = P(R_t, W_t \mid U_t) + P(R_t, \neg W_t \mid U_t)$$
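Normalization and marginalization can be sketched as one function. The function name is hypothetical. The example input is built from the joint prior and placeholder sensor values (0.8, 0.9, 0.1, 0.2), which are assumptions made for illustration and are not the document's observation model.

```python
from __future__ import annotations


def normalize_and_marginalize(
    unnormalized: dict[tuple[bool, bool], float],
) -> tuple[dict[tuple[bool, bool], float], float]:
    """Scale the four values to sum to 1, then sum wind out to get P(R_t | U_t)."""
    total = sum(unnormalized.values())  # S
    posterior = {state: value / total for state, value in unnormalized.items()}
    # Marginalize: add the two realities where it is raining, whatever the wind.
    p_rain = sum(p for (rain, _wind), p in posterior.items() if rain)
    return posterior, p_rain


# Unnormalized values from the joint prior and HYPOTHETICAL sensor numbers;
# these placeholders are for illustration only.
example = {
    (True, True): 0.8 * 0.12,
    (True, False): 0.9 * 0.18,
    (False, True): 0.1 * 0.28,
    (False, False): 0.2 * 0.42,
}
posterior, p_rain = normalize_and_marginalize(example)
print(f"P(R_t | U_t) = {p_rain:.3f}")
```

As a sanity check on the design, if the sensor ignores wind (0.9 whenever it rains, 0.2 whenever it does not), the result equals a single-variable update with $P(R_t) = 0.3$, namely $0.27 / 0.41$.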

The Conclusion

At time